Power, Capital, and Code: AI’s 2026 Playbook

From a 1.2‑gigawatt Texas data center to a 15‑billion‑dollar venture haul, we break down the money, compute, and policy shaping AI’s next phase. Plus, DeepSeek’s coding model tease and OpenAI’s massive equity pool for talent.

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids’ cloned voices...

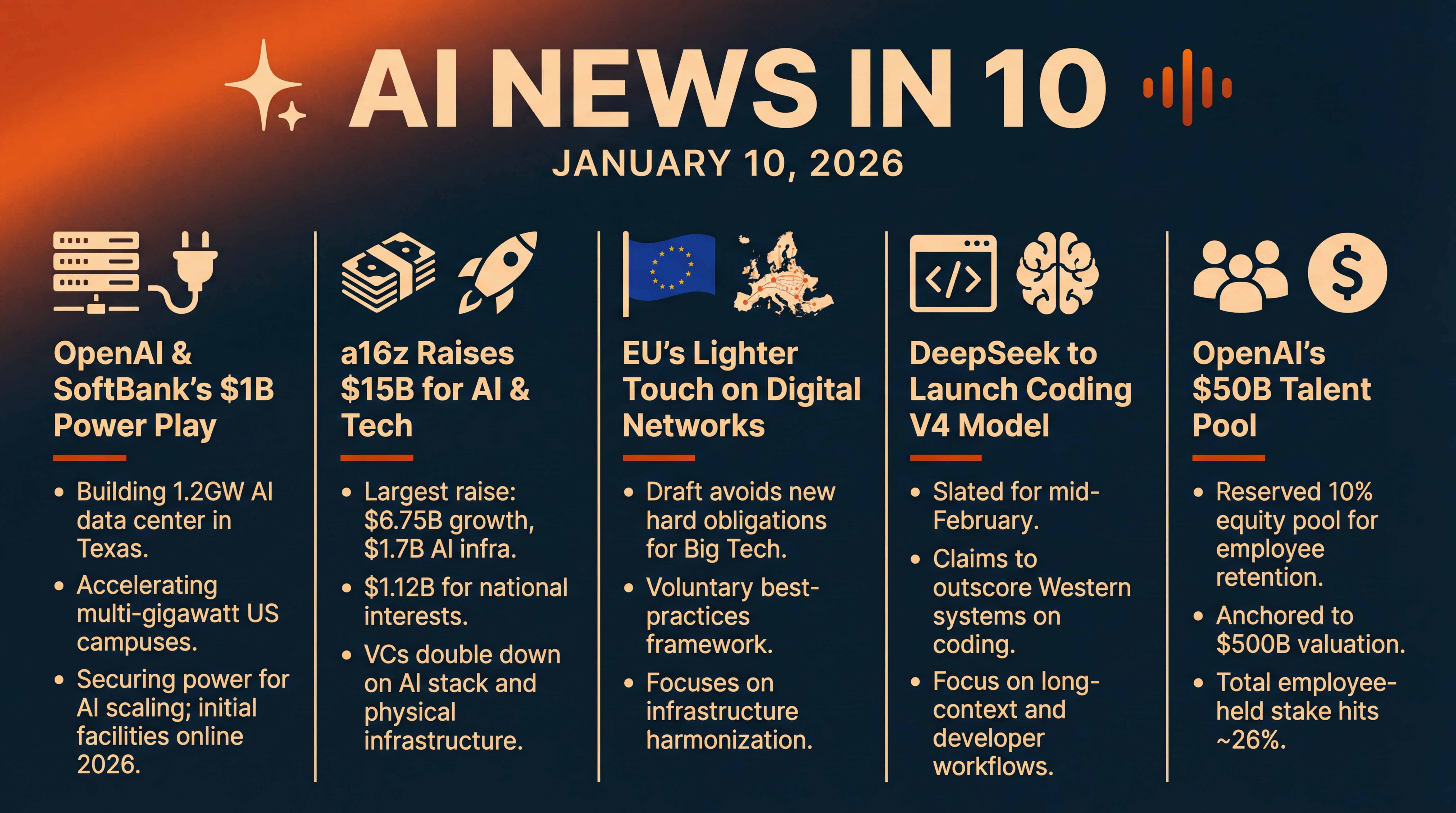

It’s Saturday, January 10, 2026, and today’s lineup is all about scale: money, compute, and ambition. OpenAI and SoftBank are writing a billion‑dollar check to speed up data center construction, headlined by a 1.2‑gigawatt site in Texas... Andreessen Horowitz has closed a monster 15 billion dollars in new funds to fuel AI and strategic tech... Brussels is floating a lighter‑touch draft on digital networks... China’s DeepSeek is reportedly readying a coding‑focused model for launch next month... and OpenAI is carving out a massive 50 billion dollar equity pool to keep top talent on board. Let’s dive in.

Story one: OpenAI and SoftBank are putting real steel—and silicon—behind the AI infrastructure boom. They’re investing one billion dollars, split evenly, into SB Energy, SoftBank’s infrastructure arm, to accelerate multi‑gigawatt data center campuses in the U.S. The headline project is a 1.2‑gigawatt facility in Milam County, Texas, where OpenAI has signed a long‑term lease and tapped SB Energy to build and operate the site. Initial facilities start coming online this year. That’s a staggering amount of power—on the order of hundreds of thousands of homes—to feed AI training and inference clusters.
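For anyone who wants to check the homes comparison, here is a minimal back-of-envelope sketch in Python. The per-home draw figures are assumptions for illustration, not numbers from the announcement: roughly 1.2 kilowatts as a year-round average for a U.S. home, and roughly 5 kilowatts for a hot-afternoon peak. The function name `homes_equivalent` is made up for this example.

```python
# Back-of-envelope: how many homes' worth of power is 1.2 GW?
# Per-home figures below are ASSUMPTIONS for illustration only.

FACILITY_GW = 1.2  # headline capacity from the story


def homes_equivalent(capacity_gw: float, kw_per_home: float) -> int:
    """Return how many homes, each drawing kw_per_home, add up to capacity_gw."""
    capacity_kw = capacity_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return round(capacity_kw / kw_per_home)


# Assumed draws: ~1.2 kW average, ~5 kW at peak (both rough rules of thumb).
ASSUMED_DRAWS_KW = {"average draw": 1.2, "peak draw": 5.0}

for label, kw in ASSUMED_DRAWS_KW.items():
    print(f"{label}: about {homes_equivalent(FACILITY_GW, kw):,} homes")
```

Under those assumptions the answer runs from about 240,000 homes at peak draw to about a million at average draw, so the exact comparison depends heavily on which per-home figure you pick.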

Why now? Power is the constraining resource for AI. Analysts have warned that data center electricity demand could outpace utility build‑outs for years, pushing operators to secure generation and grid capacity up front. OpenAI and SoftBank are signaling they won’t wait for the grid to catch up—they’re vertically aligning compute build‑outs with energy delivery so model roadmaps don’t stall. The partners also say the Texas campus is designed to minimize water usage, and includes local grid investments and workforce development. SB Energy adds that several multi‑gigawatt campuses are under construction, with services beginning in 2026.

It’s also a notable governance move. The companies frame this as part of “Stargate,” a multi‑year, multi‑hundred‑billion‑dollar push to stand up U.S. AI infrastructure. OpenAI says SB Energy will also be a customer, rolling out ChatGPT and API integrations internally—an interesting two‑way relationship that pairs hyperscale customers with the builders of the underlying power and racks.

Story two: Venture dollars are flowing again—and in size. Andreessen Horowitz closed more than 15 billion dollars across five new funds, its largest raise to date. The breakdown reportedly includes 6.75 billion for growth‑stage scaling bets, 1.7 billion earmarked for AI infrastructure, and another 1.12 billion aimed at so‑called national interests, spanning defense, housing, and supply chains. For perspective, this single raise was more than 18 percent of all U.S. venture capital allocated in 2025. Talk about dry powder.
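The fund math is easy to sanity-check. This is a rough sketch using only the figures quoted above; because both the 15 billion and the 18 percent are "more than" numbers, the results are ballpark values, and the variable names are just for illustration.

```python
# Figures as reported in the story, in billions of U.S. dollars.
TOTAL_RAISE = 15.0       # "more than 15 billion", treated here as a floor
SHARE_OF_US_VC = 0.18    # "more than 18 percent" of 2025 U.S. venture capital

# Only three of the five funds are broken out in the story.
NAMED_FUNDS = {
    "growth": 6.75,
    "AI infrastructure": 1.7,
    "national interests": 1.12,
}

named_total = sum(NAMED_FUNDS.values())
remainder = TOTAL_RAISE - named_total        # left for the funds not broken out
implied_us_vc = TOTAL_RAISE / SHARE_OF_US_VC  # total implied by the 18% claim

print(f"named funds: {named_total:.2f}B")
print(f"not broken out: at least {remainder:.2f}B")
print(f"implied 2025 total behind the 18% figure: roughly {implied_us_vc:.0f}B")
```

So the three named funds account for about 9.57 billion, leaving at least 5.43 billion for the rest, and the 18 percent claim implies a 2025 base of roughly 83 billion for whatever measure of venture allocation that figure uses.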

The signal here is twofold. First, LPs remain convinced that AI compute, tooling, and applications still have legs—despite whiplash valuations and last year’s bubble worries. Second, the firm is leaning into the physical side of AI—chips, data centers, and the energy layer—alongside software and agents. That mirrors what we’re seeing from corporates like OpenAI and SoftBank: if you want to ship more capable models, you need capital‑intensive infrastructure alongside clever algorithms.

Story three: a Brussels plot twist. According to people familiar with the draft, the European Commission’s upcoming Digital Networks Act is poised to avoid new hard obligations on U.S. platforms like Google, Meta, Microsoft, Amazon, and Netflix. Instead, they would join a voluntary best‑practices framework overseen by EU telecom regulators—BEREC—while the law focuses on infrastructure harmonization: spectrum licensing, pricing, and rollout guidelines. That’s a pivot from earlier pressure by European carriers for stricter mandates or payments from Big Tech to help fund networks.

If this stance holds through negotiations with member states and Parliament, it could ease near‑term compliance risk for the biggest platforms—even as other EU regimes like the Digital Markets Act and the AI Act keep tightening around competition and safety. The Commission frames the DNA as competitiveness‑focused, aiming to accelerate investments in telecom and digital infrastructure while giving governments flexibility on fiber transition timelines. Translation: carrots over sticks, at least for now.

Story four: DeepSeek, the Chinese AI outfit that rattled markets last year with low‑cost, high‑performance models, is reportedly prepping a new model focused on code. The Information, cited by Reuters, says V4 is slated for mid‑February, with internal tests suggesting it could outscore leading Western systems on programming tasks and handle very long coding prompts. If accurate, that would make V4 a direct strike at developer workflows—think refactors across giant codebases, multi‑file context reasoning, and tool‑use orchestration.

Why this matters: Coding is where model claims meet production pain. Long‑context reliability, deterministic toolchains, and solid error handling are the difference between novelty and ROI. DeepSeek already shifted the cost narrative last year by training competitive models at lower expense; a coding‑centric model that combines long context with strong reasoning could pressure incumbents on both price and capability—especially in price‑sensitive markets where DeepSeek has been gaining share. Keep in mind, DeepSeek hasn’t commented yet, and these are pre‑launch performance claims... so we’ll watch for third‑party evaluations in February.

Story five: OpenAI isn’t just investing in servers; it’s investing in people. The company has reportedly reserved a 50 billion dollar employee stock grant pool, about 10 percent of equity, anchored to an internal valuation of roughly 500 billion dollars last fall. That’s on top of about 80 billion dollars’ worth of vested equity previously issued, bringing employee‑held stakes to roughly 26 percent. While OpenAI hasn’t commented, the report dovetails with chatter about fresh financing that could push its valuation to around 750 billion dollars. For talent markets, that’s the loudest signal yet that the compensation arms race is still in full swing.
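Those percentages hang together, and a few lines of Python show how. This sketch uses only the reported figures. The last step, repricing the stake at 750 billion, assumes no dilution from newly issued shares, which is a simplification and not something the report states.

```python
# Reported figures, in billions of U.S. dollars.
VALUATION = 500.0         # internal valuation last fall
NEW_POOL = 50.0           # reserved employee stock grant pool
VESTED = 80.0             # vested equity previously issued
RUMORED_VALUATION = 750.0  # valuation floated for a possible new round

pool_share = NEW_POOL / VALUATION                 # the "about 10 percent"
employee_share = (NEW_POOL + VESTED) / VALUATION  # the "roughly 26 percent"

# ASSUMPTION: no dilution, so the same percentage applies at the new valuation.
stake_at_rumored = employee_share * RUMORED_VALUATION

print(f"new pool: {pool_share:.0%} of equity")
print(f"employee-held: {employee_share:.0%} of equity")
print(f"worth about {stake_at_rumored:.0f}B at {RUMORED_VALUATION:.0f}B (no dilution)")
```

In practice a new financing round would issue shares and shrink that percentage, so the 195 billion figure is an upper-end illustration and not a forecast.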

Taken together, today’s stories sketch a clear direction for 2026. AI leaders are de‑risking their compute roadmaps by locking in power and land, VCs are reloading to back both the chips‑to‑cloud stack and mission‑critical apps, Brussels is modulating how hard it leans on new rules to keep investment flowing, challengers like DeepSeek are zeroing in on developer use cases, and OpenAI is trying to make sure its best people stick around for the long haul.

Quick recap before we go: OpenAI and SoftBank put one billion dollars into SB Energy and a 1.2‑gigawatt Texas data center... Andreessen Horowitz closes a 15 billion dollar fund suite with a heavy AI tilt... the EU’s Digital Networks Act draft looks lighter on Big Tech than expected... DeepSeek’s coding‑first V4 is reportedly weeks away... and OpenAI earmarks a 50 billion dollar equity pool to retain talent. Big money, big power, big moves—and plenty more to watch as these bets turn into shipping products and humming data halls.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.