Gigawatts, Gemini, and the Healthcare AI Race

Apple taps Google’s Gemini to supercharge Siri, Meta lays plans for country-scale compute, and Nvidia teams with Lilly on a billion-dollar AI drug lab. Plus, OpenAI buys Torch to power ChatGPT Health, while Washington turns up the heat on data center energy.

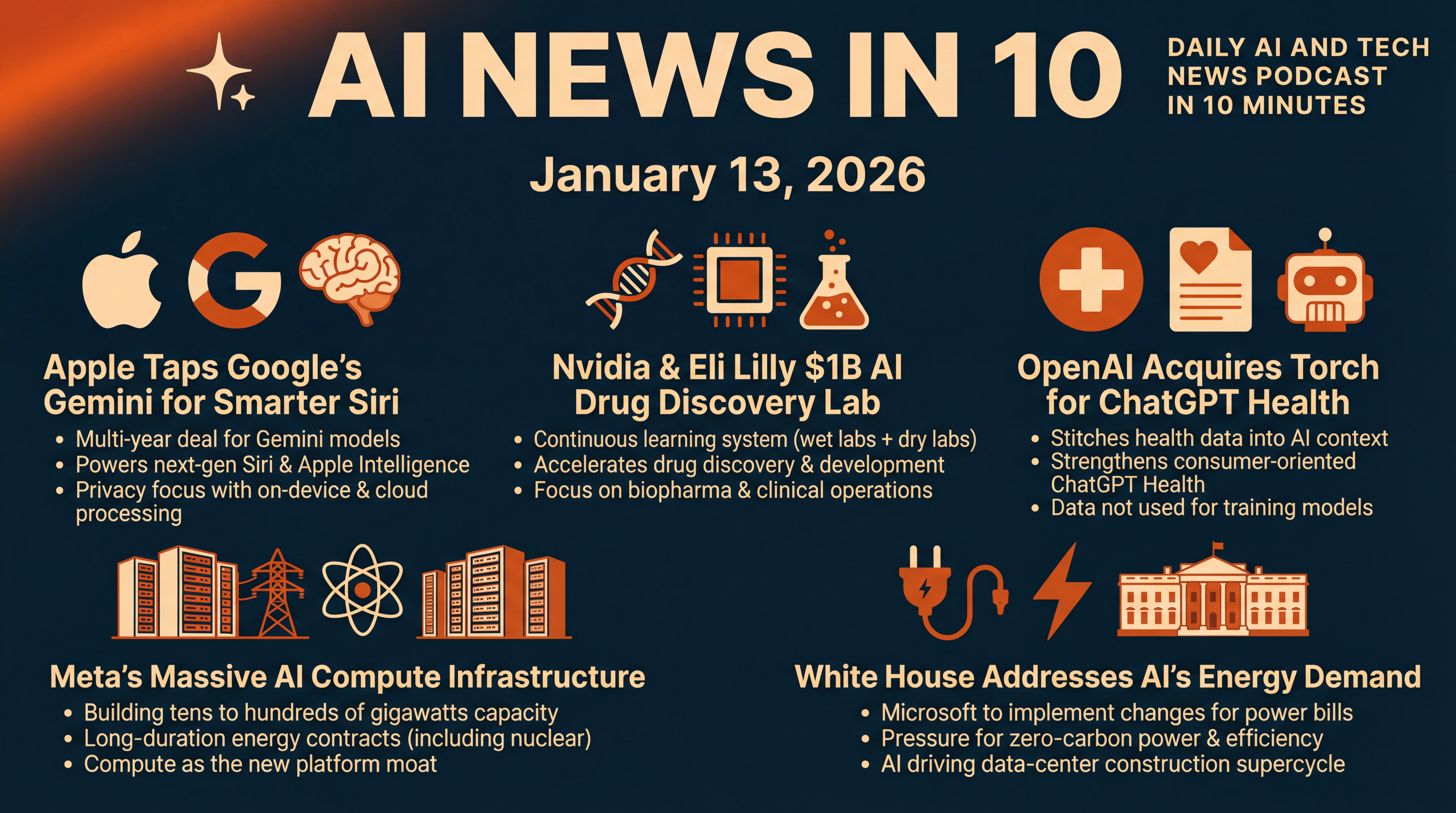

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It’s Tuesday, January 13, 2026... and it’s a big day in AI and tech.

Apple is making a headline-grabbing pivot, tapping Google’s Gemini to power a smarter Siri later this year. Meta is rolling out a massive new infrastructure program—aiming for tens, and eventually hundreds, of gigawatts of AI compute over time. Nvidia and Eli Lilly are teaming up on a billion-dollar lab to accelerate drug discovery. OpenAI is pushing deeper into healthcare with the acquisition of a tiny but strategic health-records startup called Torch. And amid soaring energy demand from data centers, the White House says Microsoft will implement changes to help keep Americans’ power bills in check.

We’ll unpack what changed, why it matters, and what to watch next. Reports from Reuters and Axios confirm the Apple–Gemini deal; Meta’s plan and Microsoft’s energy move landed today; and the Nvidia–Lilly partnership, plus OpenAI’s Torch news, round out a busy week for healthcare-centric AI.

Let’s start with the shocker from Cupertino. Apple is partnering with Google to supercharge Siri. The companies are entering a multi-year collaboration in which Google’s Gemini models will power the next generation of Siri and underpin parts of the Apple Intelligence stack.

The revamped assistant is slated to arrive later this year, after Apple delayed major Siri upgrades planned for 2025. Apple says it chose Gemini after careful evaluation, while OpenAI’s ChatGPT will remain available—opt-in—for more complex queries. It’s a strategic reset for Apple… long proud of building everything in-house… now leaning on a rival’s model to close a capability gap, while promising privacy safeguards via on-device processing and Private Cloud Compute.

A few threads to watch. Apple reportedly evaluated multiple AI partners before picking Gemini, and antitrust watchers are already raising eyebrows—given Apple’s long-running default search deal with Google. Elon Musk publicly questioned the concentration of power this creates… while Apple framed the move as unlocking better experiences for over two billion active devices, without compromising its privacy stance.

Practically, expect a more context-aware Siri and broader Apple-wide AI features that tap Gemini in the cloud—while keeping personal context local. We’ll be watching how regulators react… and whether Apple eventually transitions back to its own frontier-scale models.

Second story. Meta just announced Meta Compute, a new unit dedicated to building AI infrastructure at unprecedented scale. CEO Mark Zuckerberg set the tone: Meta intends to build tens of gigawatts of compute capacity this decade, and hundreds over time, powered by long-duration energy contracts, including nuclear.

The initiative is led by Santosh Janardhan, with Daniel Gross steering strategic capacity planning and partnerships, and Meta’s president, Dina Powell McCormick, focused on government and sovereign relationships. It’s a bold bet that the next advantage in AI isn’t just model quality—it’s raw, reliable compute at country scale.

Why should you care? Because Meta is framing compute as the new platform moat. The company says it’s tying up 20-year energy deals and planning data centers with power profiles rivaling small nations—a direct response to AI’s insatiable capacity needs. If Meta succeeds, it could secure a durable edge in both research and products, especially for the personal-assistant features it’s chasing. The flip side: siting, permitting, and powering these facilities will be politically charged… and could reshape energy markets and local grids along the way.

Third. Healthcare AI gets a major jolt. Nvidia and Eli Lilly will invest up to one billion dollars over five years to create a co-innovation lab in the San Francisco Bay Area.

The pitch is a continuous learning system for drug discovery—linking Lilly’s automated wet labs with Nvidia’s computational dry labs—so experiments, data generation, and model training feed one another around the clock. The lab will run on Nvidia’s BioNeMo software and its next-gen Vera Rubin architecture, aiming to compress timelines from target identification to clinical candidates.

This builds on Lilly’s claim that it has biopharma’s most powerful AI supercomputer, based on Nvidia systems—a signal that big pharma isn’t just piloting AI… it’s institutionalizing it. The lab will explore AI applications beyond discovery, into manufacturing and clinical operations, with digital twins modeling production lines and supply chains before physical changes are made. Watch for near-term benchmarks: how many programs enter preclinical development faster, and whether AI-designed molecules translate into higher clinical success rates.

Fourth. OpenAI is quietly building the plumbing for a consumer-oriented health assistant. It just acquired Torch, a four-person startup that stitches together lab results, medications, visit notes, and wearable data into what the founders called a medical memory for AI. Reports put the deal near 100 million dollars in equity.

The team will fold into OpenAI to strengthen the newly announced ChatGPT Health—where users can upload records and connect popular wellness apps. OpenAI says health data won’t be used to train its models.

Context matters. Anthropic just introduced Claude for Healthcare, with integrations spanning CMS datasets and medical knowledge bases—escalating the arms race for HIPAA-oriented workflows and clinical admin tasks. For OpenAI, Torch helps solve a hard, underappreciated problem: wrangling fragmented, messy health data into a consistent context layer an AI can reason over. The opportunity is massive… but so are privacy expectations, regulatory scrutiny, and the need to prove clinical safety in the real world.

Fifth. The energy footprint of AI is now squarely a policy story. President Trump said today that Microsoft will implement major changes to ensure Americans don’t see higher power bills because of data centers. He added that the administration is working with leading tech firms on additional steps in the coming weeks.

Details were light, but the signal is clear—expect pressure on hyperscalers to secure long-term, zero-carbon power, invest in grid upgrades, and improve efficiency… potentially with new reporting or siting guidance.

Zooming out, AI is catalyzing a data-center construction supercycle. Analysts estimate global capacity could roughly double within four years—from about 100 gigawatts today to near 200—with AI workloads claiming a growing share, and liquid cooling becoming standard in high-density racks. That’s creating jobs and infrastructure investment… and it’s forcing thorny trade-offs for local communities and utilities. Today’s policy nudge at Microsoft won’t be the last.

Before we wrap, two quick nuggets to watch this week. First, the Apple–Google Gemini pact could face questions from competition regulators, given the companies’ existing search partnership. Developers will be eager to see how Apple exposes on-device versus cloud APIs for privacy-sensitive features.

Second, in healthcare AI, Nvidia and Lilly’s lab will be a bellwether for whether deep, co-located partnerships can move drug pipelines faster than traditional outsourcing or vendor relationships.

That’s a wrap for January 13, 2026. Apple taps Google to power a smarter Siri. Meta goes big on compute and energy. Nvidia and Lilly bet a billion on AI-driven drug discovery. OpenAI buys Torch to weave your health data into ChatGPT. And Washington zeroes in on the grid costs of AI—with Microsoft in the hot seat.

We’ll be back tomorrow with the latest moves shaping AI and tech… see you then.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it, or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.