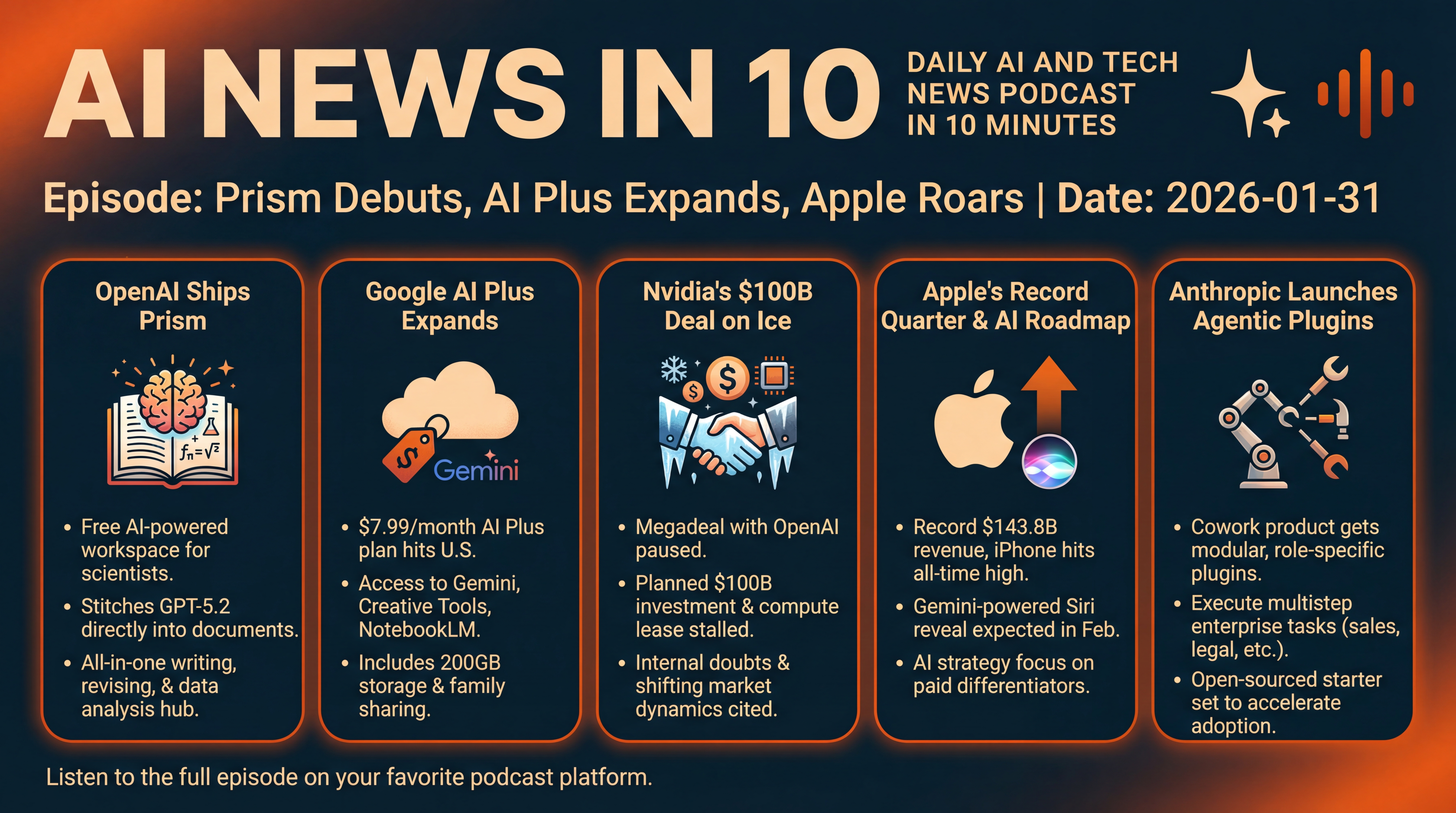

Prism Debuts, AI Plus Expands, Apple Roars

OpenAI launches Prism for scientists, Google brings its budget AI Plus plan to the U.S., Nvidia’s OpenAI megadeal cools, Apple posts a record quarter, and Anthropic adds agentic plugins to Cowork. We break down what it means for pricing, compute, and real enterprise adoption.

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

Here’s what’s moving in AI and tech today.

OpenAI ships a new, free workspace for scientists called Prism — and the research world has thoughts. Google expands its cheaper AI Plus plan to the U.S. at seven dollars and ninety-nine cents a month. The Wall Street Journal reports Nvidia’s eye-popping one hundred billion dollar megadeal with OpenAI is on ice. Apple turns in a record-setting quarter and hints at where its AI is headed. And Anthropic brings agentic plugins to its Cowork product and open-sources a starter set to get enterprises going. Let’s dive in.

First up, OpenAI’s Prism. If you’ve ever wrestled with LaTeX, tracked citations in three different tools, and ping-ponged drafts over email, Prism aims to be your all-in-one scientific writing and collaboration hub.

It’s free for anyone with a ChatGPT account. It’s LaTeX-native. And — crucially — it stitches GPT-5.2 directly into the document, so the model sees your full paper context: equations, references, figures... the works. OpenAI says that turns the assistant from a sidecar chat into a co-authoring experience — drafting, revising, diagramming, even reasoning through math in context — without exporting or copy-pasting between apps.

The company built Prism on Crixet, a cloud LaTeX platform it acquired, and it’s available now. Kevin Weil at OpenAI framed it this way: 2026 could be to science what 2025 was to coding... AI moves from novelty to workflow. That’s the promise.

But the reception isn’t universally rosy. Researchers are already debating whether easy, polished manuscript tools will accelerate what some call “AI slop” — more low-quality submissions straining peer review. Prism is a writing and collaboration tool, not an autonomous researcher, but publishers and reviewers are wary of the volume effects. It’s the classic trade-off: reduce friction for careful authors... and for careless ones. Still, as a free entry point with GPT-5.2 built in, it’s likely to spread across labs and grad programs fast.

Second, Google’s AI Plus plan is now live in the U.S. for seven dollars and ninety-nine cents a month. Think of it as a lighter, cheaper tier below AI Pro — access to Gemini in the app, creative tools like Flow and video features, NotebookLM for research and writing, two hundred gigabytes of cloud storage, and family sharing for up to five members.

Google has been rolling this through emerging markets since late 2025, and now it's expanding to all markets where its AI plans are sold, including the U.S. The positioning is clear: go after people willing to pay a little to try a paid AI plan, and meet OpenAI’s entry plan on price. There’s also a limited-time fifty percent off promo for the first two months.

Why this matters: the mass market for AI is price sensitive and use-case driven. If Plus unlocks enough wow moments in Gemini, NotebookLM, and creative tools, Google grows a funnel of casual users who might upgrade later. It also keeps pressure on rivals to keep entry-tier pricing — and perceived value — tight.

Third, the megadeal that lit up 2025 headlines has reportedly hit pause. The Wall Street Journal says Nvidia’s plan to invest up to one hundred billion dollars in OpenAI — tied to building roughly ten gigawatts of compute that OpenAI would lease — has stalled amid internal doubts at Nvidia and shifting market dynamics. The original framework was non-binding. Now both sides are rethinking their alignment, including the possibility of a large equity investment instead. Other reporting notes CEO Jensen Huang has emphasized privately that the earlier terms weren’t final, and that the companies remain partners even as negotiations evolve.

For context, any long-dated, infrastructure-heavy commitment at this scale collides with real-world constraints — power, land, permitting — and a fast-moving competitive landscape. If the structure changes, expect ripple effects across chip supply, cloud planning, and funding mixes for AI build-outs in 2026.

A quick framing note here: the deal’s pause doesn’t mean a divorce — more like a cooling-off period to explore structures that balance risk across compute, equity, and demand forecasts. Watch whether OpenAI shores up compute via a mix of hyperscaler commitments, sovereign funds, or diversified chip roadmaps... while Nvidia keeps capacity prioritized for a broader swath of buyers.

Fourth, Apple’s numbers. The company delivered an all-time-record December quarter — 143.8 billion dollars in revenue, up sixteen percent year over year, and two dollars and eighty-four cents in diluted EPS, up nineteen percent. iPhone had its best quarter ever. Services hit a record. And Apple now boasts an installed base of more than 2.5 billion active devices. Apple also declared a twenty-six cent dividend payable February twelfth. Regionally, Greater China jumped nearly thirty-eight percent year over year, with Europe, the Americas, Japan, and the rest of Asia Pacific also growing. That is a monster print by any standard.

The market reaction, though, was mixed. Some analysts cheered the beat; others flagged uncertainty around Apple’s consumer-facing AI roadmap — how, when, and with whom it will monetize deeper AI in Siri, services, and devices. Reports suggest a Gemini-powered Siri reveal is likely in February, with a bigger software push at WWDC in June... but investors want clarity on what becomes a paid differentiator and what stays table stakes. In short: records today, questions tomorrow — classic Apple playbook, but AI makes the stakes higher.

And fifth, Anthropic just made its push into agentic enterprise workflows more real. The company expanded its new Cowork product with plugins — modular, role-specific packages that let Claude execute multistep tasks the way your company actually works. Think sales research and follow-ups tied to your CRM, legal document review with risk flags, customer support drafting with your tone and tools, or data analysis routines — packaged so teams can install, tweak in natural language, and share across roles.

Anthropic open-sourced eleven starter plugins to seed adoption, and says the goal is to move from helpful assistant to genuine collaborator that handles projects end to end. The feature is in research preview and available to paying Claude customers.

Why it’s notable: enterprises don’t buy models — they buy outcomes and integrations. Open-sourcing starter plugins lowers friction for IT and line-of-business teams to pilot real workflows. If Cowork proves it can safely touch systems of record and return consistent outcomes, Anthropic strengthens its case as a pragmatic, adoption-driven alternative in a crowded assistant market.

That’s it for today. We covered OpenAI’s push to streamline science with Prism... Google bringing AI Plus to the U.S.... the reported stall of Nvidia’s one hundred billion dollar OpenAI deal... Apple’s blowout quarter and AI signals... and Anthropic’s agentic plugins for enterprise Cowork.

As always, we’ll keep tracking how these moves reshape where the value in AI is actually accruing — chips, clouds, models, and the workflows that tie them together. See you tomorrow.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.