Capex Shock and the AI Supply Squeeze

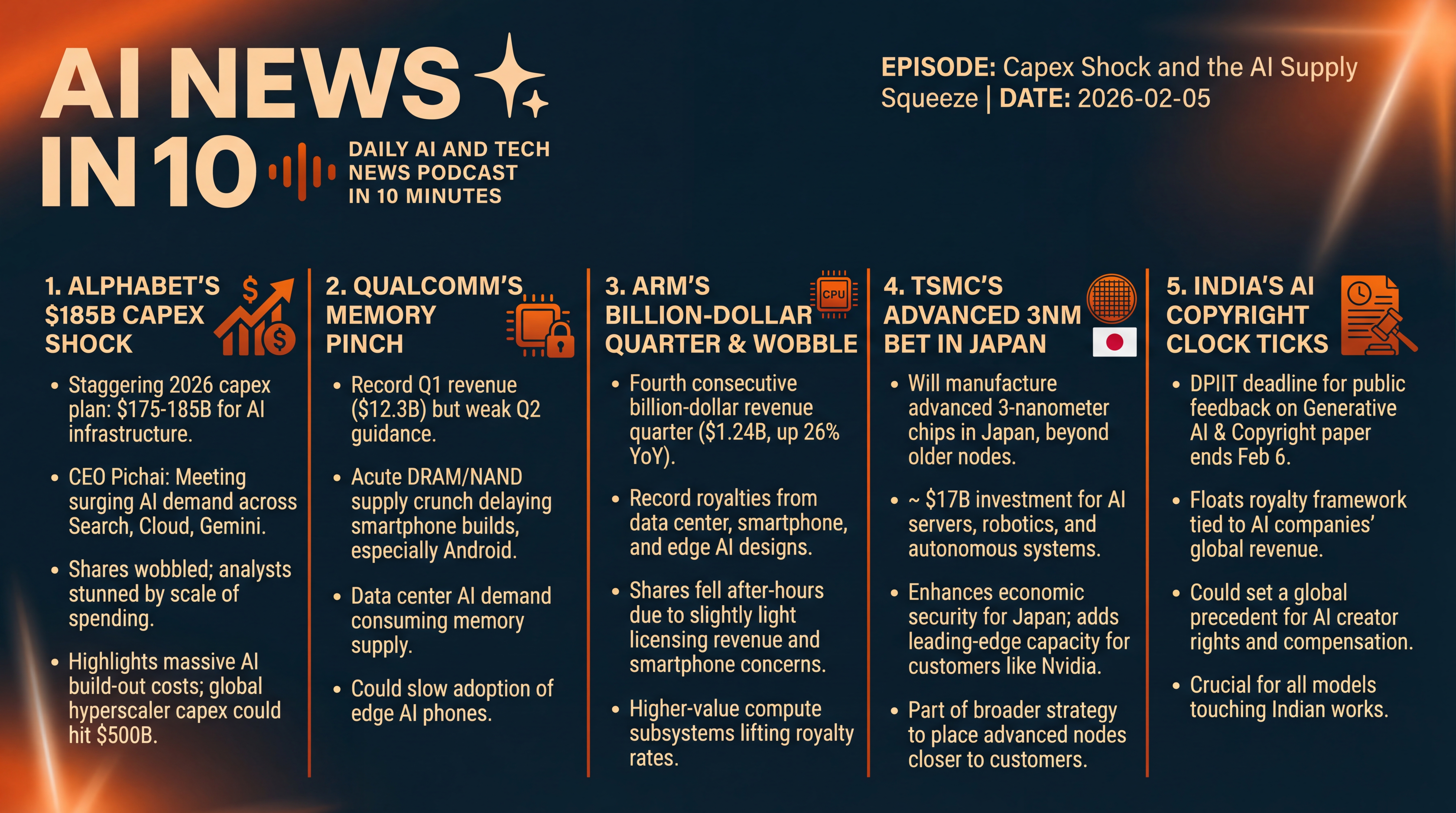

Alphabet’s massive 2026 spend, Qualcomm’s memory crunch, Arm’s royalty momentum, and TSMC’s 3-nanometer move in Japan — plus India’s looming AI copyright deadline. How capex, supply chains, and policy will shape the pace of AI in your phone and in the cloud.

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It’s Thursday, February 5, 2026, and today’s lineup is all about the cost — and consequences — of the AI build-out. We’ve got Alphabet stunning Wall Street with a 2026 capital-spending plan that dwarfs expectations, Qualcomm warning that a memory squeeze is chilling phone builds, and Arm notching another billion-dollar quarter even as a licensing wobble dents the stock. Then we’ll shift to supply chains: TSMC says it will make cutting-edge 3-nanometer chips in Japan... and we’ll close with a fast-approaching policy deadline in India that could reshape how AI models pay creators. Let’s get into it.

Alphabet first, because the numbers are staggering.

After a solid fourth quarter, with 113.8 billion dollars in revenue, up 18 percent year over year, and Google Cloud growth accelerating to 48 percent, Alphabet told investors it expects to spend between 175 and 185 billion dollars on capital expenditures in 2026. That’s data centers, chips, and the power and networking to feed them.

CEO Sundar Pichai framed it as meeting surging demand for AI products across Search, Cloud, and the Gemini ecosystem. Shares wobbled as investors digested the sheer scale — analysts had penciled in far less — but the company is leaning into AI infrastructure as a competitive moat. The capex guide was reiterated across filings and executive commentary tied to yesterday’s results.

To put it in context... market watchers estimate hyperscalers could collectively push toward half a trillion dollars in capex in 2026. Alphabet’s outlay alone is now the headline case for that thesis — and it’s already showing up in markets. Overnight and early-morning notes flagged chip and equipment names catching a bid even as broader tech struggled, while some Asia markets slid on worries about how much — and how fast — this AI spend needs to scale.

Story two: Qualcomm.

The chipmaker delivered record fiscal Q1 revenue of about 12.3 billion dollars and beat on earnings, but guided below Wall Street expectations for the current quarter, blaming an acute memory supply crunch that’s hobbling smartphone builds. Management pointed to tight DRAM and NAND availability pushing OEMs to delay or reshuffle production, especially in the Android mid-range.

The guide, 10.2 to 11.0 billion dollars in revenue and 2.45 to 2.65 dollars in EPS, fell shy of consensus, and the stock dropped roughly 10 to 12 percent in after-hours trading. The subtext: edge AI phones are real, but you can’t ship without memory... and AI demand in data centers is consuming a lot of that supply.

Why this matters: over the past year, Qualcomm’s narrative has broadened beyond handsets to PCs, automotive, and even data-center inference — but phones still drive a big chunk of revenue. A prolonged memory bottleneck could dent the spring build cycle and the pace at which AI-enhanced phones reach mainstream price points. Watch for commentary from Android OEMs over the next few weeks on mix shifts and regional demand.

Story three: Arm’s momentum — and a speed bump.

Arm reported its fourth consecutive billion-dollar revenue quarter, posting 1.24 billion dollars for Q3 of its fiscal year, up 26 percent year over year, with record royalties driven by data center, smartphone, and edge AI designs. Royalty revenue hit about 737 million dollars; license and other revenue climbed to roughly 505 million.
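For anyone following along with the numbers, here is a quick sanity check of how those two segments add up. It is only a sketch using the rounded figures quoted above; the variable names are made up for illustration.

```python
# Rounded figures as quoted in the episode, in millions of US dollars.
royalty_musd = 737
license_and_other_musd = 505

# The two segments should sum to roughly the 1.24 billion dollar headline.
total_musd = royalty_musd + license_and_other_musd
royalty_share = royalty_musd / total_musd

print(f"Total revenue: {total_musd / 1000:.2f} billion dollars")
print(f"Royalty share of revenue: {royalty_share:.1%}")
```

On these rounded inputs, royalties work out to a bit under three-fifths of the quarter's revenue.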

Yet shares fell after hours as licensing came in a hair light versus lofty expectations — and because smartphone weakness signaled by other suppliers could pinch near-term results. The bigger picture remains clear: Arm’s architecture sits under nearly everything — from Nvidia’s Grace to AWS Graviton to new Arm-based CPUs at Google and Microsoft — and higher-value compute subsystems are lifting effective royalty rates.

For developers and systems builders, this translates into a steady migration: more high-performance Arm CPUs pairing with AI accelerators in data centers, while Arm-based designs at the edge pick up power-efficiency wins as AI features land on devices. If you’re modeling the ecosystem, the combination of Arm’s unit volumes and rising per-unit royalty rates is the line to watch.
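If you want to play with that idea, one simple way to think about it is that royalty revenue is roughly units shipped times royalty per unit, so the two growth rates compound. The sketch below assumes that simplified model; the function name and the input percentages are hypothetical and are not Arm's reported figures.

```python
def royalty_growth(unit_growth: float, per_unit_royalty_growth: float) -> float:
    """Combined growth rate, assuming royalty revenue = units * royalty per unit."""
    return (1 + unit_growth) * (1 + per_unit_royalty_growth) - 1


# Hypothetical inputs for illustration only: units up 5 percent,
# royalty per unit up 15 percent.
example = royalty_growth(unit_growth=0.05, per_unit_royalty_growth=0.15)
print(f"Combined royalty growth: {example:.2%}")
```

The point of the toy model is that a modest rise in unit volumes can still produce strong royalty growth when the per-unit rate is climbing too.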

Story four is a big supply-chain pivot with geopolitical overtones.

TSMC says it will manufacture advanced 3-nanometer semiconductors in Japan at its second Kumamoto fab — moving beyond its original plan for older nodes. The announcement came during CEO C. C. Wei’s meeting with Prime Minister Sanae Takaichi in Tokyo, and local reports peg the investment around 17 billion dollars as plans are finalized. The parts are aimed at AI servers, robotics, and autonomous systems — exactly where demand is hottest. For Japan, it’s an economic-security win; for TSMC, it’s another pressure valve as customers from Nvidia to Apple clamor for leading-edge capacity.

There’s a broader capacity story here. Even after ramping spending, with TSMC guiding capex as high as 56 billion dollars this year, analysts still see packaging and leading-edge wafers staying tight well into 2027, with Nvidia locking down a large share of CoWoS advanced-packaging slots. TSMC’s push to place advanced nodes and packaging closer to customers in Japan and the U.S. is about resilience, speed... and keeping the AI flywheel spinning.

And story five: a policy clock that runs out tomorrow.

India’s Department for Promotion of Industry and Internal Trade — DPIIT — extended the deadline for public feedback on its "Generative AI and Copyright — Part I" working paper to Friday, February 6. The paper floats a royalty framework tied to AI companies’ global revenue once models are commercialized, and hints at retroactive compensation for past training.

Global model makers, Indian startups, publishers, labels, and creator groups have been filing submissions, because any India-specific licensing regime could set a template that other countries study, copy, or react against this year. If you operate or train models touching Indian works, today and tomorrow are your last chance to weigh in.

A few takeaways across these threads.

First, AI infrastructure isn’t just expensive — it’s lumpy. Alphabet’s capex surge and TSMC’s node-placement decisions ripple through currencies, commodities, power grids, and local politics.

Second, supply chains remain the governor on growth. Qualcomm’s memory warning — and the packaging bottlenecks around high-end accelerators — show how one missing piece holds up the whole product.

Third, the policy environment is moving... sometimes quickly. India’s consultation may foreshadow how emerging markets balance creator rights with AI development, even as the EU and U.K. continue to refine their own regimes.

That’s the beat for today: Alphabet’s eye-popping AI bill, Qualcomm’s memory-pinched guide, Arm’s billion-dollar cadence, TSMC’s 3-nanometer bet in Japan, and India’s clock ticking on AI copyright rules. We’ll be watching how markets digest the capex shock, how handset makers navigate memory, and whether industry players file last-minute comments in New Delhi... because all of it shapes how fast AI reaches you — on your phone, in the cloud, and everywhere in between.

Thanks for listening. A quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it, or want to do something similar, reach out to me at emad at ai news in 10 dot com; that's ai news in one zero dot com. See you all tomorrow.