Deepfakes Go Viral, Networks Get Smart

Hollywood reels from a viral deepfake as T-Mobile rolls out network-level live translation. Plus Tesla shifts FSD to subscriptions, Applied Materials rides the AI chip boom, and YouTube finally lands on Vision Pro.

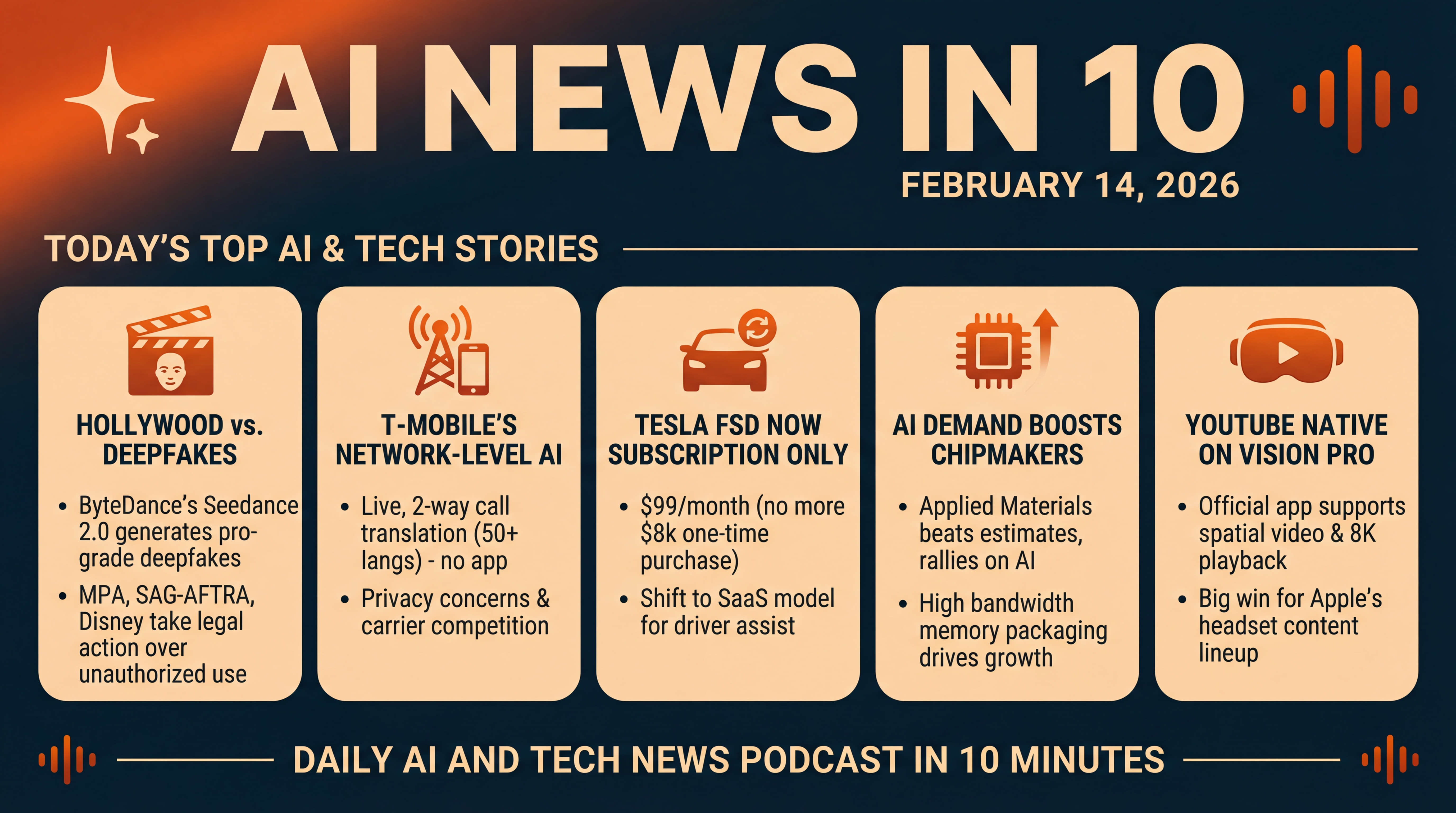

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It’s Saturday, February 14, 2026. Here’s what’s moving in AI and tech today...

Hollywood is up in arms over a hyper-real deepfake made with ByteDance’s new video generator. T-Mobile just switched on network-level AI that live-translates your phone calls. Tesla’s Full Self-Driving is now subscription-only. Applied Materials is rallying on AI demand, especially for high-bandwidth memory packaging. And two years after launch, YouTube finally has a native app on Apple’s Vision Pro with spatial video and even 8K on newer headsets. Let’s dive in.

First up... the deepfake that set off alarm bells in Hollywood.

A 15-second rooftop brawl between uncanny recreations of Tom Cruise and Brad Pitt, generated from a two-line prompt, went viral this week. The clip was made with Seedance 2.0, a powerful new AI video model from ByteDance, and the reaction was immediate. The Motion Picture Association blasted the launch as unauthorized use of U.S. copyrighted works at massive scale, and SAG-AFTRA condemned the use of members’ likenesses and voices without consent. Disney took the extraordinary step of sending a cease-and-desist letter, alleging ByteDance used Disney IP, from Marvel to Star Wars, in training and promotion. One screenwriter summed up the mood starkly: “It’s likely over for us.”

This is the clearest flashpoint yet between frontier generative video and long-standing copyright and talent-consent norms... and it’s happening in public, at internet speed. Coverage comes from outlets including Business Insider and TheWrap.

Two big angles emerge.

One: capability. Seedance 2.0 isn’t just text-to-video. It offers director-style control over camera moves, lighting, and multi-angle outputs... collapsing time and budget for what looks like pro-grade footage.

Two: liability. Studios and unions argue that if models are trained or prompted in ways that reproduce protected characters and performances, that’s a rights violation, period. Expect this to fast-track new legal tests, watermarking requirements, and possibly model-specific licensing schemes as studios try to claw back control while the tech keeps leaping forward. The debate is already lighting up Reddit and industry forums.

Story two... T-Mobile is pushing AI out of apps and into the network.

The carrier announced Live Translation, a real-time, two-way call translator for more than 50 languages that runs at the network level. No special phone required, no apps to install. If you’re on T-Mobile, you start a normal call and activate it by dialing star eight seven star... and the system auto-detects the two languages and translates back and forth as you speak. The free beta opens to eligible postpaid members, with a broader launch expected later this year. T-Mobile says it doesn’t store recordings or transcripts during the beta, and executives emphasized that the service works over VoLTE, VoNR, and Wi‑Fi calling, so it’s not just a 5G showpiece. It’s a notable shift: AI as a carrier feature, not just an app feature.

Why it matters: building translation into the network lowers friction dramatically. Grandma with a flip phone can now talk to a shopkeeper across the world... and be understood. It also sets up a competitive race among carriers to embed more agentic network services, from translation to real-time spam screening to call summarization. The privacy piece will be key: users will want clear, verifiable limits on data retention, and regulators will likely ask how these AI voice features intersect with wiretap and consent laws across states and countries. Reported by T-Mobile and The Verge.

Story three... a quick but important one: today is the last day Tesla owners can buy Full Self-Driving outright.

As of February 14, Tesla is removing the eight-thousand-dollar one-time purchase and moving FSD to subscription-only at ninety-nine dollars a month in the U.S. Tesla pitches this as making FSD more accessible with a lower entry price... but it also cements a software-as-a-service model for one of the most controversial driver-assist features on the market. Remember, FSD remains a supervised Level 2 system, so drivers must stay attentive and in control. Tesla has been emailing owners with a final-chance push, and some fine print around transferability prompted fresh scrutiny from watchdogs and the EV press. The strategic subtext: recurring revenue, faster upgrade cycles, and pressure to expand the FSD subscriber base. Reported by Engadget.

Story four... Applied Materials rode the AI wave this week, beating estimates and guiding with confidence as demand for AI compute ripples through the chipmaking supply chain.

For the quarter ended January 25, revenue came in at seven point zero one billion dollars, with non-GAAP EPS of two dollars and thirty-eight cents. CEO Gary Dickerson highlighted surging investment in leading-edge logic, high-bandwidth memory, and advanced packaging, areas where Applied is a process-equipment leader, and said the company expects its semiconductor equipment business to grow more than twenty percent in calendar 2026. Investors liked what they heard: shares jumped double digits as analysts pointed to a multiyear build-out of 3D packaging capacity to feed HBM demand for AI accelerators. Coverage via GlobeNewswire and Barron’s.

A quick decode: as model sizes balloon and memory bandwidth becomes the bottleneck, AI performance is increasingly tied to how fast, and how efficiently, you can move data in and out of HBM stacks. That puts tools for through-silicon vias, hybrid bonding, and fan-out packaging into high gear. Applied’s print and outlook suggest that, beyond headline GPU shortages, the AI cycle is broadening across the equipment ecosystem.

And wrapping with a consumer angle... YouTube has finally launched a native app for Apple’s Vision Pro.

Until now, Vision Pro users were stuck with the web version or third-party workarounds that were later pulled. The official app supports the full catalog, including standard videos, Shorts, and 180, 360, and VR180 spatial formats, and on the newer M five-based Vision Pro, it even supports up to 8K playback. It hooks into your subscriptions, playlists, and watch history, with spatial environments for that theater-like feel. It took two years, but this closes a glaring gap in Vision Pro’s content lineup, and signals Google is willing to support visionOS even as it nurtures Android XR. Some reviewers note it’s more baseline than groundbreaking at launch, but it’s a big win for owners who’ve been waiting. Reported by MacRumors.

Stepping back... today’s stories sketch a clear theme.

Generative tools are crossing a threshold: powerful enough to wow, disruptive enough to trigger legal fights. Networks are getting smarter, turning AI into an ambient utility. Automakers are locking cornerstone features behind subscriptions. And the AI build-out continues under the hood, from HBM to advanced packaging, even as consumer platforms like Vision Pro slowly fill in the app gaps.

We’ll keep tracking the policy fights, the infrastructure race, and the everyday utilities that make AI feel... well... normal.

Thanks for listening. A quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com; that's ai news in one zero dot com. See you all tomorrow.