Galaxy S26 AI, Intrinsic Returns, and $600B Compute

We break down Samsung’s agentic Galaxy S26, Google bringing Intrinsic back in-house, a bipartisan U.S. AI standards push, OpenAI’s recalibrated $600B compute plan, and the UK’s new inference test lab. Quick context and why it matters — in ten.

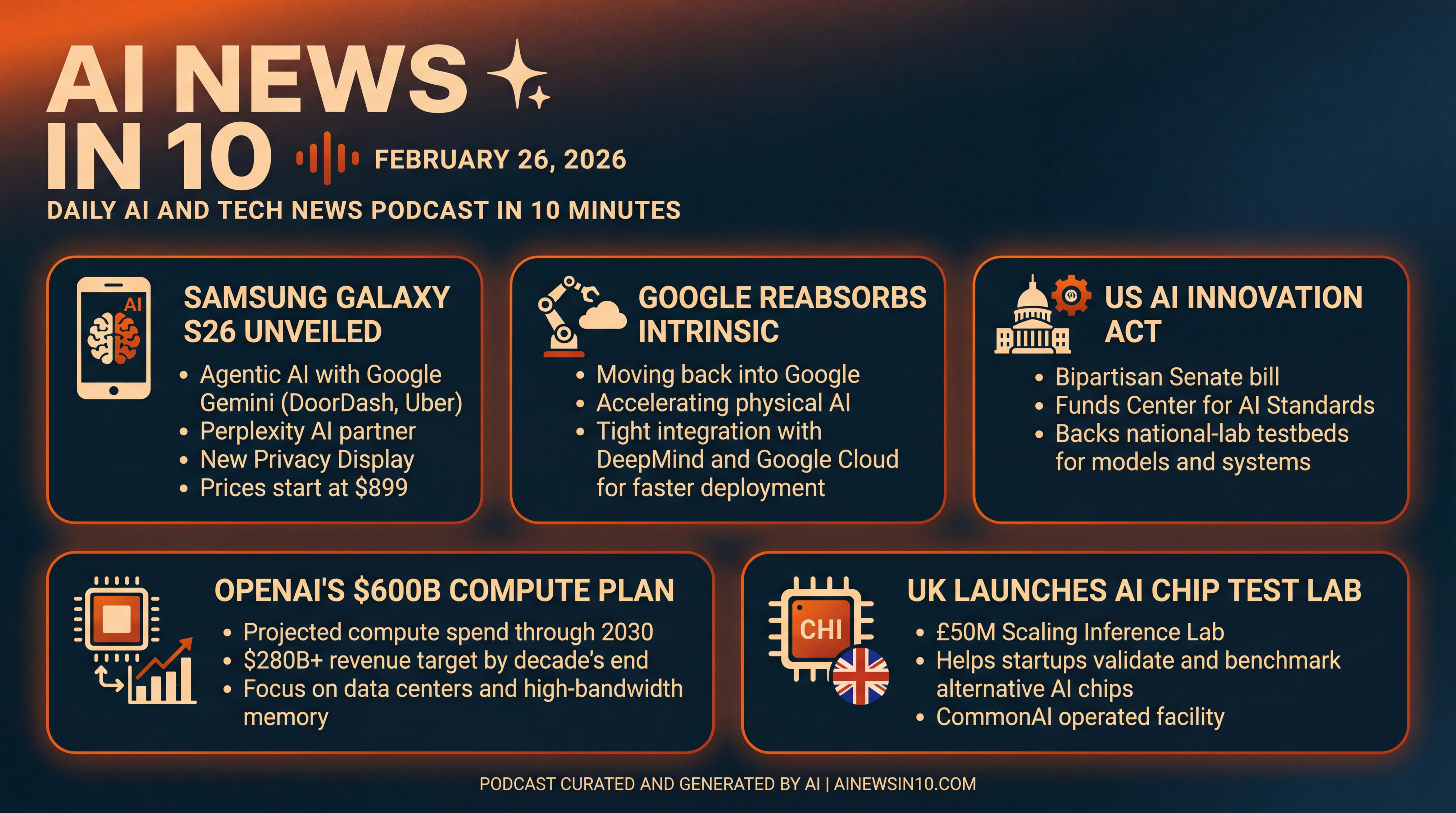

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my own and my kids' cloned voices...

Thursday, February 26, 2026.

Today’s AI News in 10 is stacked — Samsung officially unveils the Galaxy S26 line with on-device and cloud AI, plus a new Privacy Display and a surprising partner alongside Google’s Gemini... Google pulls its robotics moonshot, Intrinsic, back into the mothership to push physical AI... a bipartisan Senate bill returns to boost U.S. AI testbeds and standards... OpenAI recalibrates its mega-scale compute ambitions and shares fresh revenue figures... and the UK sets up a national test lab to help startups validate AI chips.

Let’s dive in.


First up — Samsung’s Galaxy S26 goes all in on agentic AI.

Samsung has taken the wraps off the Galaxy S26 family, which arrives March 11.

Starting prices are $899 for the S26, $1,099 for the S26 Plus, and $1,299 for the S26 Ultra.

The headline isn’t just bigger batteries or camera tweaks; it’s the AI. Google’s Gemini is acting more like a mobile agent: it can read a group chat, assemble a food order in DoorDash, or book you an Uber... then pause for your approval before it completes the task. That human-in-the-loop moment is key for trust and liability, and the agent feature rolls out first in the U.S. and South Korea.

Samsung is also adding a third assistant by partnering with Perplexity — shipping alongside Bixby and Gemini — so you actually get a choice of AI copilots out of the box.

Some features run on-device; others tap the cloud. And the Ultra adds a Privacy Display that narrows viewing angles so only the person directly in front of it can see the screen. Think of it as a shoulder-surfing shield built into the glass.

Analysts say the emphasis is on practical, everyday wins — summoning rides, composing orders, editing photos — to move AI from flashy demo to daily habit.

Sources: The Associated Press, Wired, and the Wall Street Journal.

Next — Google reabsorbs Intrinsic to accelerate physical AI.

Alphabet’s robotics software venture Intrinsic — the team that pitched an Android-for-robots layer — is moving back into Google as a distinct group working closely with Google DeepMind, leaning on Gemini models and Google Cloud.

What’s the strategy? As robotics races from research to deployment, Google wants the software stack, the models, and the cloud under one roof — to ship faster.

Intrinsic had already acquired Vicarious and pieces of Open Robotics, released its Flowstate developer platform, and integrated NVIDIA’s Isaac tools for grasping and manipulation. Now those efforts will be more tightly aligned with DeepMind’s work on robot task execution.

The bet: tightly integrated AI plus developer tooling could finally make robot programming approachable beyond the largest factories.

Sources: TechCrunch and The Verge.

Now — a bipartisan push to boost U.S. AI standards and testbeds.

On Capitol Hill, Senators Todd Young and Maria Cantwell are reintroducing the Future of AI Innovation Act. The bill would authorize a Center for AI Standards and Innovation to coordinate voluntary benchmarks and transparency guidelines with industry and academia — and back national-lab testbeds for AI and adjacent fields like robotics and quantum.

This is the plumbing companies have been asking for: places to validate models and systems against common metrics, without dictating a single architecture. It signals that Congress wants to support the industry, not only regulate it, by funding shared infrastructure that helps startups prove capability and safety.

Source: Axios.


OpenAI trims its long-range compute spend — still colossal at around $600 billion through 2030.

OpenAI is telling investors it now targets roughly $600 billion in total compute spending through 2030 — down from a previously floated $1.4 trillion — while projecting more than $280 billion in revenue by the end of the decade.

Nearer term, OpenAI’s 2025 numbers came in around $13 billion in revenue and $8 billion in costs, both slightly better than prior targets, according to reporting.

What does this mean? Even the reduced plan implies an unprecedented build-out of data centers, networking, and especially high-bandwidth memory — for both training and inference. The economics are maturing too — a clearer split between consumer and enterprise revenue, and growing attention to inference costs and margins. And signals like this drive vendor roadmaps across chips, optical interconnects, and power — expect hyperscalers and utilities to keep talking about 24/7 clean power and grid interconnection queues.

Source: Reuters via Yahoo Finance.

Finally — the UK launches a national AI chip test lab to help startups compete.

The UK’s Advanced Research and Invention Agency, ARIA, is putting £50 million behind a new Scaling Inference Lab in Cambridge, to be operated by the nonprofit CommonAI. The goal is to give startups a neutral, real-world facility to validate and benchmark alternative AI chips and systems, not for model training but for inference at scale. That way they can prove compatibility, performance, and efficiency to customers who default to the big three silicon vendors.

The lab will run in six-month cycles and aims to cut time-to-proof for new hardware, with an initial £16 million grant to stand up the site and support early cohorts. Program leaders argue this kind of shared testbed can de-risk buyers’ pilots and help challengers cross the credibility gap more quickly. Supporters even float the UK capturing a slice of what they see as a trillion-dollar AI chip market over the next decade.

Sources: The Times, and press details from ARIA and CommonAI.

Quick recap...

Samsung’s S26 bets big on practical, agentic AI with Gemini and Perplexity. Google folds Intrinsic back in to speed up physical AI. The Future of AI Innovation Act would fund standards and testbeds. OpenAI tightens its compute plan — but $600 billion still means mega-infrastructure. And the UK builds a national lab so startups can prove their AI chips can hang with incumbents.

That’s your AI News in 10 for Thursday, February 26, 2026.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my own and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com, that's ai news in one zero dot com. See you all tomorrow.