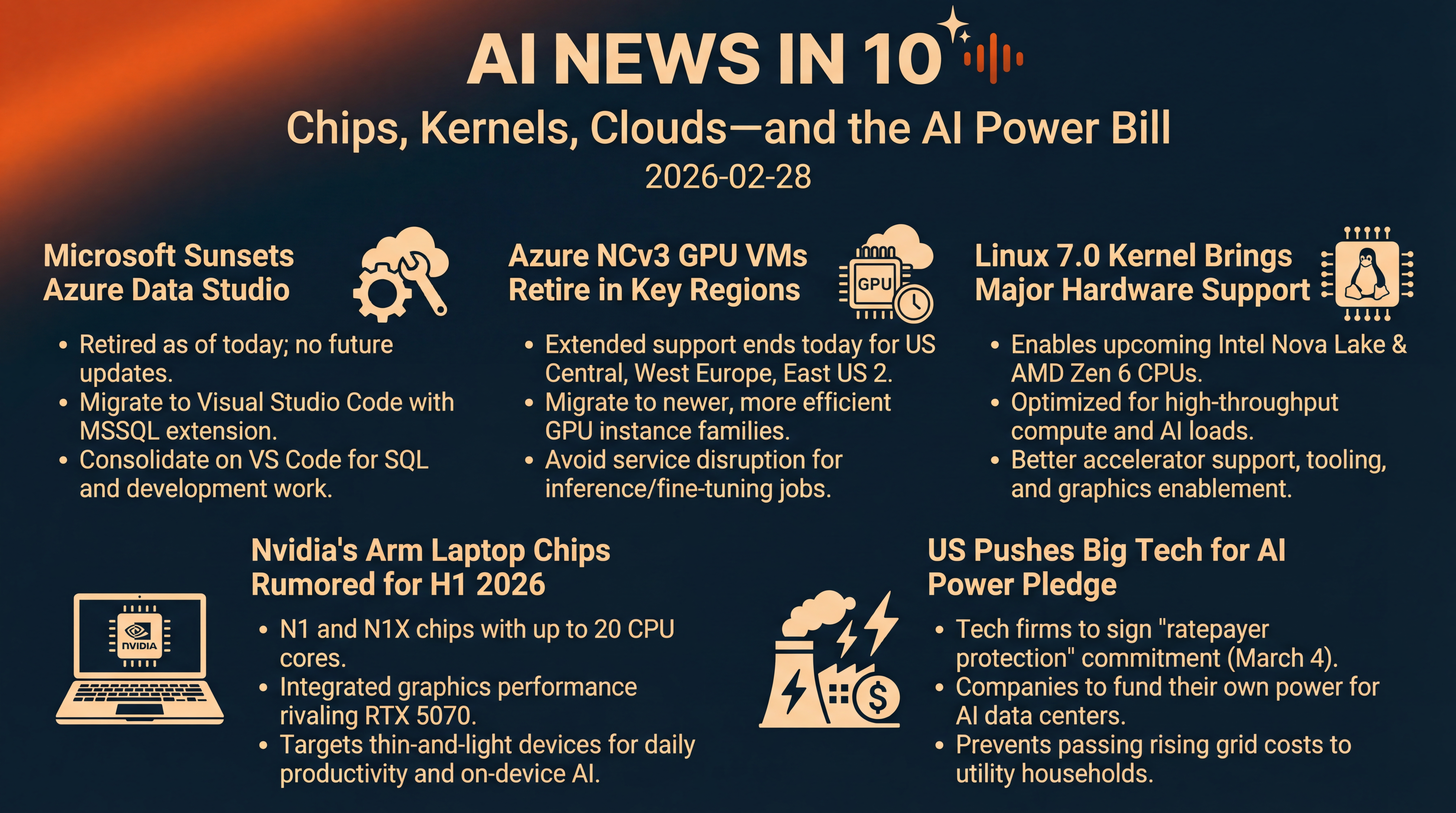

Chips, Kernels, Clouds—and the AI Power Bill

A whirlwind of AI infrastructure moves: Azure Data Studio retires, Azure’s NCv3 VMs sunset, Linux 7.0 readies big-hardware support, Nvidia’s Arm laptop rumors sharpen, and Washington readies a ‘ratepayer protection’ pledge for data center power. Get the essential takeaways and what they mean for your roadmaps.

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It's Saturday, February 28, 2026, and it's been a surprisingly busy weekend for the nuts and bolts of AI — the tools you use, the GPUs you rent, the kernel under your servers, the chips that could power your next laptop... and even the electricity feeding tomorrow's AI factories.

Here's what's moving today. Microsoft is officially sunsetting a popular data tool and nudging everyone to Visual Studio Code. Azure's older GPU VMs hit regional retirement deadlines. Linux 7.0 lines up major new hardware support. Nvidia's long-rumored Arm laptop chips look closer than ever. And Washington's push for Big Tech to pay for its own AI power inches toward signatures next week.

Story one — A quiet but consequential cutoff. Microsoft retires Azure Data Studio today.

If you manage data pipelines, tune queries for your RAG stack, or live in SQL all day, mark this date. As of today, Microsoft has officially retired Azure Data Studio. The guidance is simple — move to Visual Studio Code with the MSSQL extension, Microsoft's unified, actively developed home for SQL work going forward.

Practically, your projects open in VS Code, your scripts keep working, and you pick up modern editor perks like IntelliSense, integrated Git, and smoother continuous integration and delivery hooks. But here's the headline — Azure Data Studio will not receive updates or security fixes after today, so teams need to plan... and finish... their transition, especially where data engineering and analytics sit right next to model training and inference. Fewer tools to maintain, one editor that scales across roles. Source — Microsoft Learn.

If you still rely on Azure Data Studio add-ons — like the SQL Migration extension — note those deprecations were synchronized with today's end of support. Microsoft has published transition guides and alternatives. The message is clear: consolidate on VS Code with the MSSQL extension for day-to-day work. Source — Microsoft Community Hub and Microsoft Learn.

Story two — Azure's NCv3 GPU VMs hit the deadline in several regions.

Also landing today: a lifecycle milestone for some of Azure's older GPU virtual machines. The NCv3 series, once a workhorse for CUDA workloads, reached general retirement last year, but certain regions received extensions. For Central US, West Europe, and East US 2, that extended run ends today.

If you still have inference or fine-tuning jobs tied to NCv3 in those regions, migrate to newer families to avoid disruption. The impact isn't just housekeeping — it's a push toward more efficient instances with better performance per watt and newer drivers, which matters as AI budgets meet power and latency constraints. Source — Microsoft Learn retirement bulletin.

Two quick takeaways. First, migration is a chance to right-size — many NCv3 footprints grew organically during last year's AI rush. Second, moving up a generation often unlocks newer CUDA, driver, and networking combinations that lift throughput on the same workload... which can offset part of the migration cost if you plan it well. Source — Microsoft Learn.

Story three — Linux 7.0 momentum — big-hardware enablement for the AI era.

On the systems side, the Linux kernel train is rolling toward a major version jump. The 7.0 cycle is moving through release candidates that fold in enablement for upcoming CPU families — think Intel's Nova Lake and AMD's Zen 6 — plus a raft of drivers and performance counters that matter for high-throughput compute. Expect early adoption in distros like Ubuntu 26.04 LTS and Fedora 44 first, with broader rollouts following the usual cadence.

Under the hood, we're seeing improvements to accelerator support, expanded tooling — like L2 cache stats in Turbostat — and graphics enablement that dovetails with future integrated GPUs. These are the pieces that add up when you're shuffling batches between CPU, GPU, and memory under real production loads. Reporting — Tom's Hardware.

Why it matters — kernel-level enablement is the unglamorous prerequisite for stable AI performance. If you're planning late-2026 hardware refreshes, these changes are what let frameworks and drivers actually exploit next-gen cache hierarchies and power states. It's the difference between "it boots" and "it sustains throughput for 12 hours straight without thermal throttling." Early features and the naming shift were also flagged as Linux 6.19 wrapped, setting up the 7.0 branding and expectations. Source — The Verge.

Story four — Nvidia's Arm laptop play looks imminent. N1 and N1X rumors sharpen.

The not-so-quiet rumor mill around Nvidia's return to consumer SoCs keeps tightening its timelines. Fresh reporting points to Arm-based N1 and N1X laptop chips arriving in the first half of 2026, with early design wins expected at Dell and Lenovo. The headline claim — up to 20 CPU cores across two clusters, and integrated graphics performance on par with an RTX 5070. Ambitious targets for thin-and-light devices aimed at daily productivity, media, and on-device AI. Coverage — Tom's Hardware.

If those numbers land anywhere near spec, Windows on Arm could see its most credible third-party push yet — with Nvidia challenging Apple's M-series playbook from another angle. Strategically, this would give Nvidia three bites at the AI apple: data-center GPUs for training and inference, edge and embedded platforms like Jetson, and Arm-based consumer CPUs with strong integrated GPUs for on-device generative tasks. That stacked approach could make 2026 the year we see widespread, always-on local models for summarization, translation, and image generation living right alongside cloud copilots... assuming battery life and thermals pencil out. Source — Tom's Hardware.

Story five — The energy bill for AI. US "ratepayer protection" pledge targets March 4 signings.

On the policy front, this week's State of the Union remarks included a pledge: major tech firms will sign a "ratepayer protection" commitment next week — March 4 — to fund their own power for AI data centers rather than pass rising grid costs to households. The idea is to push hyperscalers to underwrite generation capacity — from long-term nuclear power purchase agreements to next-generation thermal and accelerated permitting — so utility customers aren't on the hook as AI power demand explodes. Outlets report that Amazon, Google, Meta, Microsoft, xAI, Oracle, and OpenAI are among those expected to sign. Reporting — The Verge.

If it materializes as described, the pledge could reshape siting and financing. Expect more direct procurement, co-located generation, and... scrutiny — because guarantees are only as good as the contracts and enforcement behind them. Data centers have already run into friction over water, noise, taxes, and local hiring. Energy self-provisioning may ease one pressure point while inviting fresh oversight on where and how new capacity gets built. Source — The Verge.

Quick recap.

Azure Data Studio bows out today — if you haven't moved to VS Code and the MSSQL extension, that's now a must.

Azure's NCv3 GPU VMs age out in more regions, nudging AI workloads to newer instances.

Linux 7.0's momentum means your 2026 hardware plans can lean into better enablement and stability.

Nvidia's rumored Arm laptop chips could make on-device AI far more capable this year.

And in Washington, a power-funding pledge for AI data centers could redefine how — and where — the next wave of compute gets built.

See you tomorrow.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it, or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.