Siri on Gemini, Claude Memory Goes Free

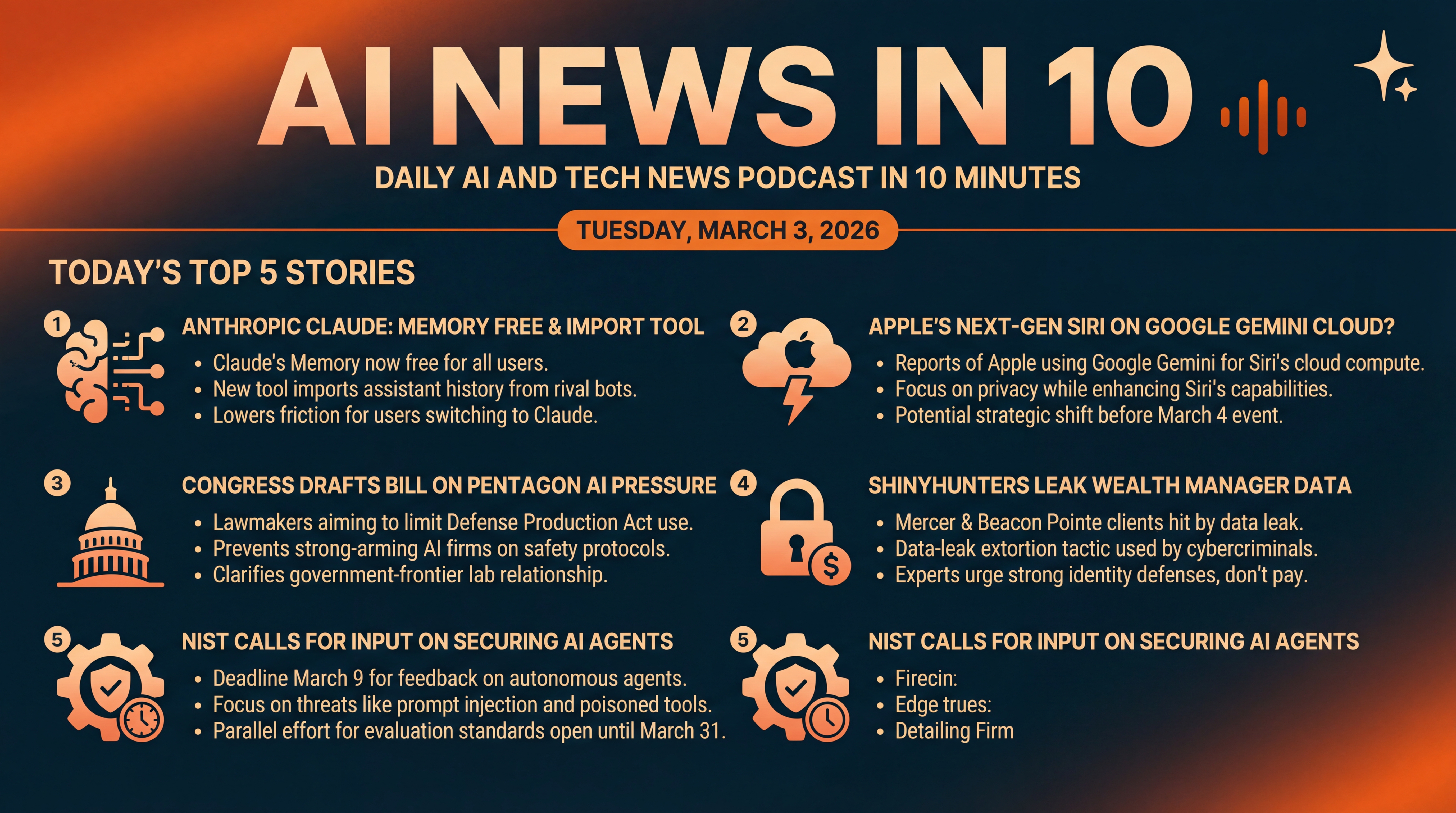

Apple reportedly eyes Google’s Gemini to supercharge Siri as Anthropic makes Claude’s Memory free and adds import tools. We also cover Congress’s AI policy push, a ShinyHunters wealth-manager leak, and NIST’s call for input on securing autonomous agents.

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids’ cloned voices...

It’s Tuesday, March 3, 2026.

Today we’ve got five fast but meaty stories... Anthropic makes Claude’s Memory free and lets you import what rival chatbots have learned about you. Apple is reportedly leaning on Google’s Gemini and Google’s cloud for a next-gen Siri. Congress is gearing up to draw legal lines around how the Pentagon can pressure AI companies. The ShinyHunters crew hits wealth managers with a fresh data leak. And NIST, the U.S. government’s standards body, is asking you (yes, you) for input on how to secure autonomous AI agents before the March 9 deadline. Let’s dive in.

Story one: Anthropic just made a very strategic move to win switchers.

Claude’s Memory is now available to free users, and there’s a new import tool on claude.com that can pull in your past assistant memories from other chatbots. If you’ve already trained another assistant on your preferences — writing style, calendar quirks, project context — you don’t have to start from scratch with Claude.

It’s part product, part growth hack: remove friction, grow share. The Verge reports you can toggle Memory in settings, and the import uses a guided prompt that extracts key facts from your previous assistant and feeds them into Claude. It’s a clear shot at rivals as assistants compete to become your default long-term coworker.

Why this matters: assistant loyalty is built on context. If Claude remembers your tools, tone, teammates, and templates — and can learn them in minutes from another service — you’re far likelier to move and stay. For teams, the real lever is compounded productivity when Memory travels with projects across people and devices.

Story two: Apple’s next-gen Siri may be getting its brain from... Google.

Multiple reports say Apple is in talks to run a more powerful, Gemini-infused Siri on Google cloud infrastructure, while still meeting Apple’s privacy bar. Apple has pitched Apple Intelligence as on-device first, with Private Cloud Compute for heavier tasks. Offloading parts of Siri’s reasoning to Google’s stack would be a striking, and very pragmatic, shift to accelerate features ahead of Apple’s special experience event tomorrow, March 4.

The Verge frames it as Apple weighing Google servers to support a Gemini-powered Siri that can deliver more personalized, responsive behavior at scale. If it happens, it’s another reminder that in the race for capable assistants, partnerships can trump pride.

What to watch: developer confidence and privacy posture. If Apple discloses clear limits, auditability, and minimization in how Siri uses third-party infrastructure, most users won’t mind where the compute lives — as long as it’s faster, more useful, and private by default. The interesting downstream effect will be on Apple’s own cloud investment curve versus buying capacity from hyperscalers.

Story three: a policy swing in Washington after the Pentagon–Anthropic clash.

Axios reports Democratic lawmakers are preparing legislation to prevent agencies from strong-arming AI firms — specifically by narrowing how tools like the Defense Production Act could be used against companies that refuse to change model safeguards. The push follows headlines about pressure on Anthropic to relax red lines around military use cases, and a rare public standoff over where to draw boundaries on autonomy, surveillance, and weaponization.

The upshot: House and Senate Democrats are drafting language to bar punitive actions in these disputes and to clarify the process, an early attempt to define the relationship between government and frontier labs in U.S. law. That’s notable in a country that still lacks comprehensive federal AI rules.

Why this matters beyond one lab: any statute here could shape how all frontier model makers negotiate safety constraints with national security agencies — and how quickly dual-use capabilities flow into defense. Clearer process could reduce whiplash for startups trying to be both safety-conscious and government suppliers.

Story four: a fresh reminder that cybercriminals follow the money — and the data.

Barron’s reports the ShinyHunters collective has leaked millions of records tied to wealth managers Mercer Global Advisors and Beacon Pointe. The firms have acknowledged attacks and begun mitigation and notifications. Unlike classic ransomware, ShinyHunters often opts for data-leak extortion: steal first, threaten public release, then pressure victims to pay.

Investigators link the crew’s tactics to broader social-engineering clusters like Lapsus$ and Scattered Spider. Think vishing (voice-phishing) calls that trick staff, followed by pivots through single sign-on to harvest troves of internal data. For clients, the immediate steps are mundane but essential: credit freezes, identity monitoring, and a wary eye on spear-phishing messages that leverage leaked details. For firms, experts quoted by Barron’s reiterate a tough line: don’t pay, disclose quickly, and work with law enforcement.

The industry angle: advisory firms hold exceptionally rich personally identifiable information and financial metadata, yet many still rely on legacy identity controls. Expect accelerated adoption of phishing-resistant multi-factor authentication, session-level anomaly detection, and strict least-privilege for back-office apps after this one.

Story five: NIST wants your help securing the coming age of AI agents, and the clock is ticking.

The National Institute of Standards and Technology’s Center for AI Standards and Innovation has an open Request for Information on securing autonomous AI agent systems, with comments due March 9. NIST is probing threats like indirect prompt injection, poisoned tools or data, and specification gaming — scenarios where an agent with tool access could go off-script in ways that matter to safety and security. The RFI will inform voluntary guidelines for hardening agents before they’re widely embedded in workflows.

If your team builds or deploys agents that act on email, code, financial systems, or procurement tools, your real-world lessons are exactly what NIST is asking for.

Context: this push sits alongside a parallel NIST effort to standardize how we evaluate language models and agents. In late January, NIST opened comments on draft guidance for automated benchmark evaluations — an attempt to make tests more valid, transparent, and reproducible across labs and products. Translation: fewer leaderboard games... more signal you can trust when picking models. That comment window runs through March 31.

Quick recap: Anthropic lowers the switching cost with free Memory and painless imports... Apple may supercharge Siri by riding Google’s Gemini cloud... Congress moves to set rules of engagement between the Pentagon and AI labs... ShinyHunters’ latest leak shows wealth managers need stronger identity and access defenses... and NIST is asking the community to help secure autonomous agents — comments due March 9, with broader evaluation standards work open through March 31.

That’s your AI News in 10 for Tuesday, March 3, 2026.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it, or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.