Native Gemini, Agent Governance, and Model Shakeups

Gemini goes native on Mac, AMD and Samsung double down on AI memory, Microsoft packages Copilot and Agent 365, Meta buys Moltbook, and OpenAI rotates models. How these shifts bring AI closer to real work.

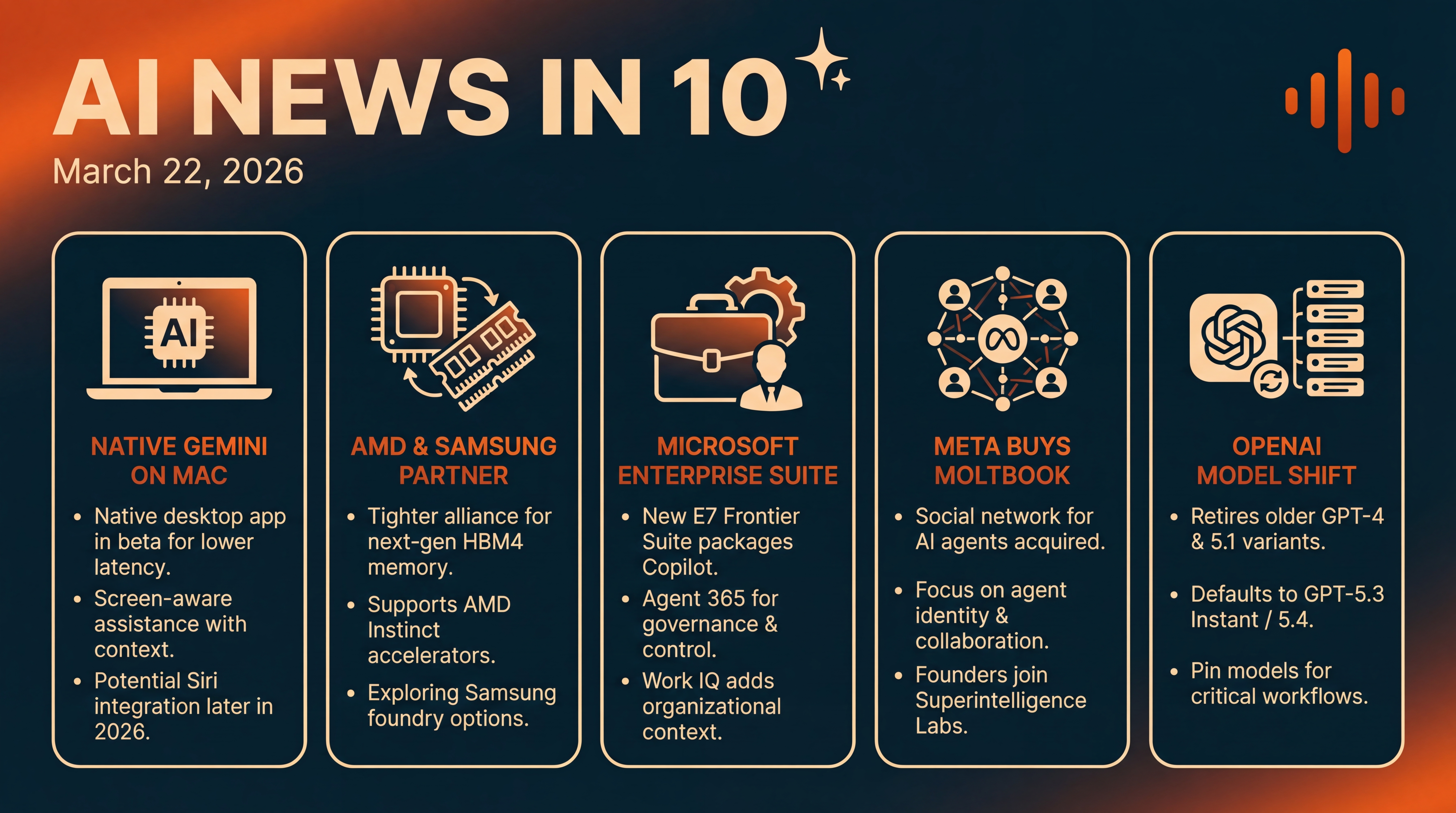

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It’s Sunday, March 22, 2026 — here’s what’s new across AI and tech.

We’ve got five stories today: Google is testing a Gemini desktop app for Mac, AMD and Samsung are linking up on next‑gen AI memory, Microsoft is packaging Copilot and agent governance into a new enterprise suite, Meta bought a social network for AI agents, and OpenAI just retired a slate of older ChatGPT models. If your workflows feel different this week... that may be why.

Let’s dive in.

Story one: Google’s Gemini is stepping out of the browser and onto the Mac desktop.

Multiple reports say a native Gemini app for macOS is in beta, aiming for a more capable, lower‑latency experience — think screen‑aware assistance that can see what’s on your desktop and help you work across apps without the tab‑hopping. T3 says the beta is limited for now but includes early features like screen sharing, so Gemini can pull on‑screen context directly into its responses.

This also lines up with chatter that Apple’s next‑generation Siri will be powered, at least in part, by Gemini later this year — so we may see both companies inch toward similar, deeply integrated desktop assistants. No public release date yet, but the signal is clear: the AI assistant race is moving beyond the web and into native apps that can safely tap your device context. That could be a big usability unlock... and the start of a fresh privacy conversation.

Story two: Chips and memory — and a notable pairing.

AMD and Samsung announced a tighter partnership around high‑bandwidth memory for AMD’s Instinct accelerators, and hinted at exploring Samsung as a potential foundry for future AMD parts. Why it matters: capacity. Samsung says its HBM4, built on a 1c DRAM process with a 4‑nanometer logic base die, will deliver up to 13 gigabits per second per pin and as much as 3.3 terabytes per second of bandwidth. That is headroom AMD wants for the MI455X GPUs and its Helios rack‑scale AI architecture due later this year.
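For anyone following along in the notes, the two Samsung figures can be tied together with some quick arithmetic. The Python sketch below assumes a 2048-bit interface per HBM4 stack, which is the width in the JEDEC HBM4 standard but was not stated in the announcement as covered here. The function name is only for illustration.

```python
def stack_bandwidth_tb_per_s(gbit_per_pin: float, interface_bits: int = 2048) -> float:
    """Peak bandwidth of one memory stack, in terabytes per second.

    interface_bits defaults to 2048, an assumption taken from the
    JEDEC HBM4 standard rather than from the episode itself.
    """
    total_gbit_per_s = gbit_per_pin * interface_bits  # all pins together
    gbyte_per_s = total_gbit_per_s / 8                # bits to bytes
    return gbyte_per_s / 1000                         # gigabytes to terabytes


print(round(stack_bandwidth_tb_per_s(13.0), 1))  # 3.3
```

Under that assumption, 13 gigabits per second per pin works out to roughly 3.3 terabytes per second for a single stack, which matches the headline number.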

The release also nods to continued shortages in memory and storage, pushing vendors to lock in supply and co‑optimize stacks from silicon to system to rack. Bottom line — more vertical alignment to keep AI training and inference pipelines fed, and a bit more foundry optionality for AMD in a world still dominated by TSMC. Reported by ITPro.

Story three: Microsoft is trying to productize the agent era for the enterprise.

Earlier this month it introduced the Microsoft 365 E7 Frontier Suite, wrapping E5, Copilot, and the new Agent 365 into one offer priced at 99 dollars per user per month. Agent 365 — Microsoft’s governance and control plane for long‑running, tool‑using AI agents — gets a general availability date of May 1, at 15 dollars per user.

Microsoft also highlighted Work IQ, an organizational knowledge layer meant to give Copilot and agents richer context from your company’s corpus — while keeping an eye on control and compliance. The company says paid Copilot seats grew more than 160 percent year over year, daily active usage is up ten times, and the number of customers deploying Copilot at scale — 35,000 seats or more — has tripled.

If your CIO is pushing harder on agent pilots this quarter, this packaging is why — it lowers procurement friction while promising a way to observe, secure, and standardize what agents actually do inside the business. Details are straight from Microsoft’s announcement.

Quick breather... why these first three stories matter together: they point to the same shift — AI experiences getting closer to your real work. Native desktop assistants that can see your screen, memory stacks purpose‑built to keep models from stalling, and enterprise suites that turn AI trials into software you can buy, govern, and scale. Different layers, same direction.

Story four: Meta just bought Moltbook, a small but buzzy social network designed not for people — but for AI agents.

Axios reports that Moltbook’s founders, Matt Schlicht and Ben Parr, are joining Meta’s Superintelligence Labs, which is led by former Scale AI CEO Alexandr Wang. The pitch: Moltbook verified and tethered agents to their human owners, letting agents identify themselves, network with other agents, and coordinate tasks in an authenticated space.

In an internal note seen by Axios, Meta suggested existing Moltbook customers can keep using the platform for now — but framed that as temporary. If you’re tracking agent ecosystems, this is interesting: Meta’s move hints at a world where agents need identity, reputation, and policy guardrails to collaborate safely across services — something beyond ad‑hoc webhooks and private sandboxes. And it gives Meta talent and primitives to build that layer. Reported by Axios.

Story five: OpenAI quietly changed the model lineup inside ChatGPT — and you may have noticed.

In February and March, OpenAI retired GPT‑4o, GPT‑4.1, GPT‑4.1 mini, o4‑mini, and, more recently, the GPT‑5.1 variants from ChatGPT, across normal chats and GPTs. OpenAI says most users now default to GPT‑5.3 Instant or GPT‑5.4 equivalents. ChatGPT Enterprise workspaces keep limited access to some models for a bit longer, and these models remain available via the API for now.

If your prompts started behaving a little differently — or a custom GPT suddenly responded in a new voice — this is probably why. It’s a reminder to teams to pin model versions for business‑critical workflows, and to regression‑test prompts when a provider rotates models. Details come from OpenAI’s Help Center notice on model retirements and timing.
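For teams that want to act on that reminder, here is a minimal Python sketch of pinning a model and regression-testing prompts. Everything named in it is hypothetical: the model identifier, the test cases, and the `fake_model` stand-in, which replaces a real API client so the example runs offline. It is one possible shape for such a check, not any provider's recommended method.

```python
from typing import Callable

# Hypothetical pinned identifier; real model names and snapshot formats vary by provider.
PINNED_MODEL = "example-model-2026-01-15"

# Each case pairs a prompt with substrings the reply must contain.
REGRESSION_CASES = [
    ("Summarize: The invoice total is 42 dollars.", ["42"]),
    ("Reply with the single word OK.", ["OK"]),
]


def run_regression(call_model: Callable[[str, str], str],
                   model: str = PINNED_MODEL) -> list[str]:
    """Return failure descriptions; an empty list means every case passed."""
    failures = []
    for prompt, required in REGRESSION_CASES:
        reply = call_model(model, prompt)
        missing = [s for s in required if s not in reply]
        if missing:
            failures.append(f"{prompt!r}: missing {missing}")
    return failures


def fake_model(model: str, prompt: str) -> str:
    """Stand-in for a real API client so the sketch runs offline."""
    return "OK, the total is 42 dollars."


print(run_regression(fake_model))  # []
```

In practice you would swap `fake_model` for a call to your provider's client and rerun the cases whenever the pinned model changes. Substring checks are deliberately crude; they catch outright breakage, not subtle shifts in tone.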

Before we wrap, two quick honorable mentions.

First, if you’re in the Apple‑plus‑Google camp, the Gemini for Mac beta — and talk of deep Siri upgrades later in 2026 — suggest we could see converging user experiences across platforms, where your assistant can securely see and act on what’s on screen. Great for productivity... but expect renewed debates about consent and data minimization.

Second, on the infra side, memory bottlenecks aren’t going away — AMD and Samsung’s alignment underscores how much of 2026’s AI progress hinges not just on model breakthroughs, but on moving bytes faster and cheaper through the stack.

That’s the rundown: Google’s Gemini heads native on Mac, AMD and Samsung tighten their AI hardware handshake, Microsoft productizes agents with the Frontier Suite, Meta buys Moltbook to explore agent social fabrics, and OpenAI retires older ChatGPT models in favor of the 5.x family. We’ll keep tracking how these threads connect — because the through‑line is clear: AI is getting closer to your work, your devices, and your data... and the stakes are getting higher for performance, privacy, and governance.

Thanks for listening. A quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it, or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.