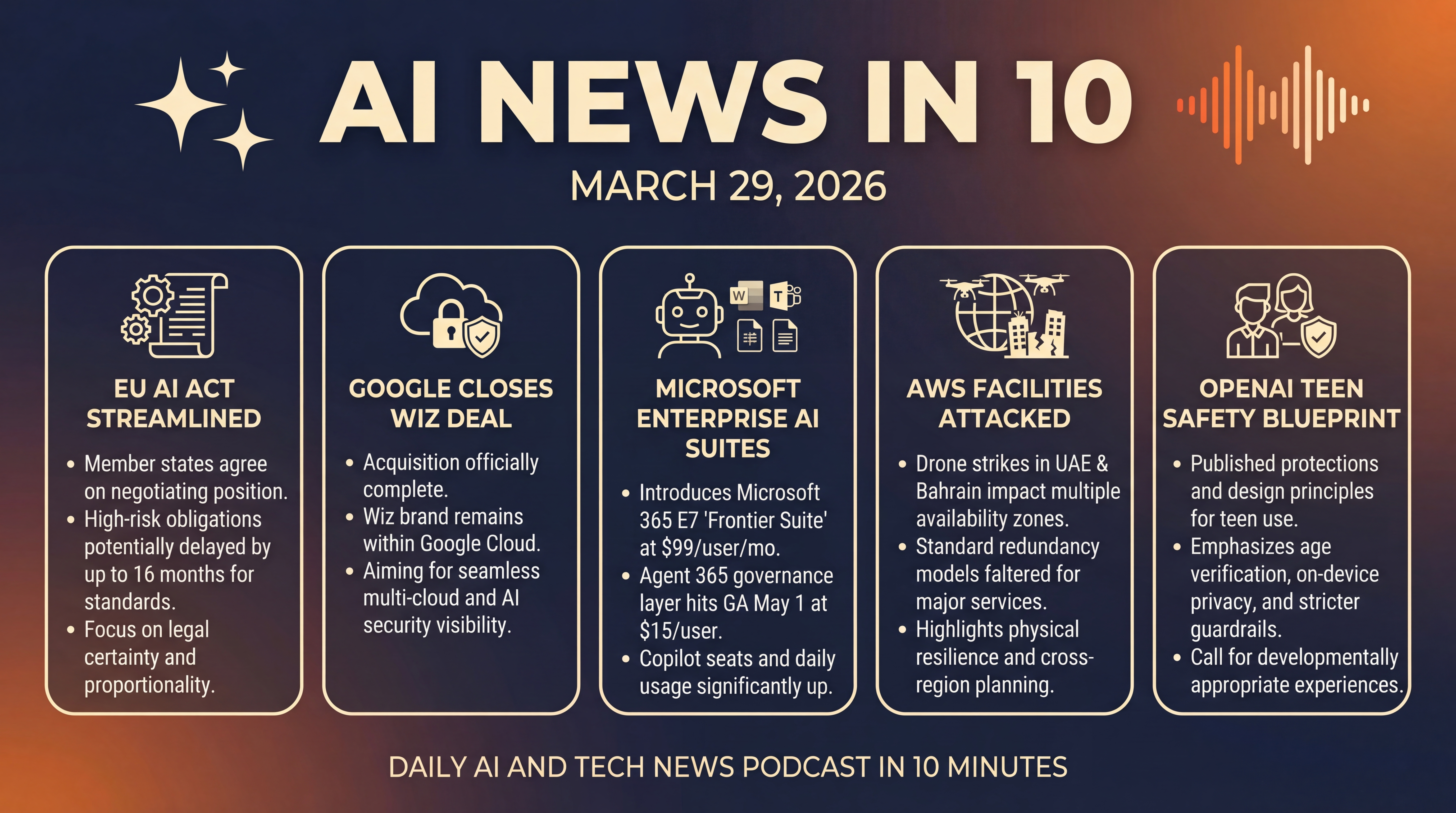

AI Rulebook, Cloud Security, and Real Resilience

From Brussels to Bahrain, we break down Europe’s AI Act timing tweaks, Google’s Wiz close, Microsoft’s Frontier Suite and Agent 365, the AWS data center attacks and what they mean for resilience, and OpenAI’s Teen Safety Blueprint. Actionable takeaways for product, security, and policy teams heading into the week.

Episode Infographic

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids’ cloned voices...

It’s Sunday, March 29, 2026, and we’ve got a weekend rundown that sets the table for the week ahead... from fresh movement on Europe’s AI rulebook, to a big cloud security deal that just closed at Google, to Microsoft’s latest enterprise AI bundle and agent tools.

We’ll also look at what a recent attack on AWS facilities means for resilience planning — and we’ll wrap with a noteworthy safety blueprint aimed at teen users of AI. Let’s get into it.

First up, Brussels is still reshaping how — and how fast — the EU’s landmark AI Act will fully bite, and that matters for anyone selling or deploying AI in Europe. Earlier this month, member states agreed on a negotiating position to streamline parts of the rulebook.

The Council’s mandate backs the Commission’s proposal to adjust when the most demanding high-risk obligations actually apply — by as much as 16 months — so they kick in only after the right standards and compliance tools exist. The aim is legal certainty, proportionality, and fewer fragmented interpretations across member states.

The package would also extend some exemptions beyond SMEs to small mid-caps, add limited allowances to process sensitive data for bias detection and mitigation, and reinforce the new AI Office’s powers. If you’re timing your compliance roadmap, this could buy you some breathing room — but you’ll also see tighter guidance and more centralized oversight.

Zooming out for a second on timing... most AI Act rules are set to apply broadly on August 2, 2026, with some provisions phasing in earlier or later. That baseline hasn’t changed — but the streamlining push could shift certain high-risk timelines to line up with the publication of harmonized standards and assessment tools. Translation for product teams: keep building toward August 2026, but expect targeted schedule tweaks — and clearer guidance to cut the gray areas.

Story two... Google’s cloud security bet just got bigger. Google has officially closed its acquisition of Wiz, the fast-growing cloud and AI security platform. Wiz will keep its brand inside Google Cloud, and the goal is to help customers secure workloads across any cloud — while acknowledging the obvious trend: as organizations race into multicloud and AI, attackers are also using AI to move faster.

That’s the thesis behind plugging Wiz into Google’s stack. If you’re a CISO watching AI agents proliferate inside your enterprise, this deal underscores how platform vendors think security posture has to be real-time, graph-aware, and cloud-agnostic.

A quick strategic read here... with Wiz in the fold, Google can pair native cloud controls with Wiz’s visibility and risk prioritization model, then layer AI-assisted detection and response on top. Expect tighter integrations across asset inventory, vulnerability management, data lineage, and identity, plus marketing pressure on rivals to match. If you were expecting a cooling period in cloud security M&A, this close says otherwise.

Third, Microsoft keeps pushing enterprise AI from pilots to production at price points CFOs can model. Earlier this month, Redmond rolled out Microsoft 365 E7 — the Frontier Suite — priced at 99 dollars per user per month, and said Agent 365 — its governance layer for AI agents — hits general availability May 1 at 15 dollars per user.

Microsoft also highlighted Wave 3 updates to Microsoft 365 Copilot and model diversity that now includes Claude alongside next-generation OpenAI models. The stats that jumped out: paid Copilot seats are up more than 160 percent year over year, daily active usage is up 10 times, and the number of customers deploying Copilot at 35,000-plus seats has tripled. Those figures are meant to convince skeptics that enterprise AI isn’t just a science project anymore.

Under the hood, Microsoft says it’s already tracking more than 500,000 internal agents, with the busiest handling research, coding, sales intelligence, customer triage, and HR self-service. Over the past 28 days, those agents generated more than 65,000 responses per day for employees.

Why that matters today: if you’re sketching an adoption plan, the bundle math is getting easier — productivity suite, copilots, and an agent registry that your security team can actually govern. The next gating factor is less licensing, and more change management... how you define work IQ, instrument it, and keep it compliant.

Fourth, resilience got a real-world stress test this month — and it wasn’t just a routine cloud outage. On March 1, drone strikes claimed by Iran’s Islamic Revolutionary Guard Corps hit three AWS data center facilities across the UAE and Bahrain, critically impairing two out of three availability zones in the UAE region, and one zone in Bahrain.

Because multiple zones went down at once, standard redundancy models faltered — impacting banks, payments platforms, a major data cloud, and a dominant ride-hailing app in the region. Analysts call it the first publicly confirmed military attack on a hyperscale cloud provider. For architects, that’s a forcing function: diversify regions, interrogate physical co-tenancy risk, and model simultaneous loss of availability zones — not just logical failover.

It also reframes the shared responsibility model when geopolitical risk targets the physical substrate. Expect RFPs — requests for proposals — to start asking for detailed cross-region blast radius modeling, greater transparency into adjacency and inter-availability-zone dependencies, and clearer service level agreements that contemplate kinetic disruptions.
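For teams that want to try the "simultaneous loss of availability zones" exercise on paper first, here is a minimal Python sketch. It assumes a hand-written placement map; the service names, the zone labels, and the helpers `surviving_services` and `blast_radius` are all hypothetical and do not correspond to any AWS API or real deployment. It simply enumerates every combination of failed zones and lists which services would have no replica left.

```python
from itertools import combinations

# Hypothetical placement: service name -> zones that hold a replica.
# Names are illustrative only, not real AWS identifiers.
PLACEMENT = {
    "payments-api": {"az-a", "az-b"},
    "ledger-db": {"az-a", "az-b", "az-c"},
    "vector-search": {"az-b"},
}


def surviving_services(placement, failed_zones):
    """Return services that keep at least one replica outside the failed zones."""
    failed = set(failed_zones)
    return {name for name, zones in placement.items() if zones - failed}


def blast_radius(placement, simultaneous_failures):
    """Map each combination of failed zones to the sorted list of services lost."""
    all_zones = sorted(set().union(*placement.values()))
    report = {}
    for failed in combinations(all_zones, simultaneous_failures):
        alive = surviving_services(placement, failed)
        report[failed] = sorted(set(placement) - alive)
    return report


if __name__ == "__main__":
    # Model two zones failing at once, not just a single-zone failover.
    for failed, lost in blast_radius(PLACEMENT, 2).items():
        print(failed, "->", lost or "nothing lost")
```

In this toy map, a service pinned to a single zone is lost whenever that zone is in the failed set, while the three-zone service survives any two-zone failure. A real model would also need shared dependencies such as power, networking, and control planes, which this sketch leaves out.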

And for AI teams — whose training or retrieval pipelines anchor to a single region — it’s a reminder to build for graceful degradation... throttled inference, cached embeddings, and multi-region fallbacks that don’t quietly die when DNS or power distribution fails in pairs.
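As a rough illustration of that graceful-degradation idea, the Python sketch below tries a list of regional clients in order, falls back to a cached answer, and otherwise returns an explicitly degraded reply instead of failing silently. Everything here is an assumption made for illustration: `RegionUnavailable`, `answer_with_fallback`, and the fake `down` and `up` clients are hypothetical stand-ins, not part of any real SDK.

```python
from typing import Callable, Dict, List


class RegionUnavailable(Exception):
    """Raised by a hypothetical regional client when its region cannot serve."""


def answer_with_fallback(
    query: str,
    regional_clients: List[Callable[[str], str]],
    cache: Dict[str, str],
) -> dict:
    """Try each region in order, then a cached answer, then a degraded reply."""
    for index, client in enumerate(regional_clients):
        try:
            result = client(query)
        except RegionUnavailable:
            continue  # this region is down; try the next one
        cache[query] = result  # refresh the cache for future outages
        return {"answer": result, "source": f"region-{index}", "degraded": index > 0}
    if query in cache:
        return {"answer": cache[query], "source": "cache", "degraded": True}
    # Nothing left: say so explicitly rather than dying quietly.
    return {"answer": None, "source": "none", "degraded": True}


def down(_query: str) -> str:
    """Fake client that simulates a regional outage."""
    raise RegionUnavailable("simulated outage")


def up(query: str) -> str:
    """Fake client that simulates a healthy region."""
    return f"result for {query}"


if __name__ == "__main__":
    cache: Dict[str, str] = {}
    print(answer_with_fallback("status", [down, up], cache))    # served by the second region
    print(answer_with_fallback("status", [down, down], cache))  # served from cache
```

The point of the `degraded` flag is that callers and dashboards can see when the system is running on a fallback, so a paired failure shows up as a visible state and not as a silent outage.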

Fifth, safety for younger users continues to gather momentum. This month, OpenAI published a Teen Safety Blueprint — a set of protections and design principles for teen use of generative AI. While many countries are revisiting age-appropriate design codes and platform obligations, the blueprint signals that model providers will be expected to go beyond generic content filters — delivering developmentally appropriate experiences, clearer disclosures, tuned default behaviors, and audit-ready oversight mechanisms.

If you’re rolling out AI to teens or family accounts, here’s a practical checklist inspired by what’s surfacing in these blueprints.

Verify age without over-collecting data.

Favor on-device privacy settings and easy-to-use reporting.

Use stricter guardrails for image and voice generation.

Publish safety-incident metrics so parents, educators, and regulators can see whether your safeguards actually work.

It’s the difference between trust us... and trust, but verify.

Quick recap... Europe is tuning the glide path for AI compliance, so pay attention to those high-risk timelines and forthcoming guidance. Google’s Wiz deal raises the bar for cloud-native security in an AI world. Microsoft’s 99-dollar Frontier Suite and Agent 365 point to governed agent ecosystems at scale. The AWS strikes in the Gulf sharpen the industry’s thinking on physical resilience for cloud and AI workloads. And OpenAI’s Teen Safety Blueprint reminds everyone building for minors that safety by design isn’t optional; it’s table stakes. We’ll keep watching these as they evolve into the week ahead.

Thanks for listening, and a quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com. That's ai news in one zero dot com. See you all tomorrow.