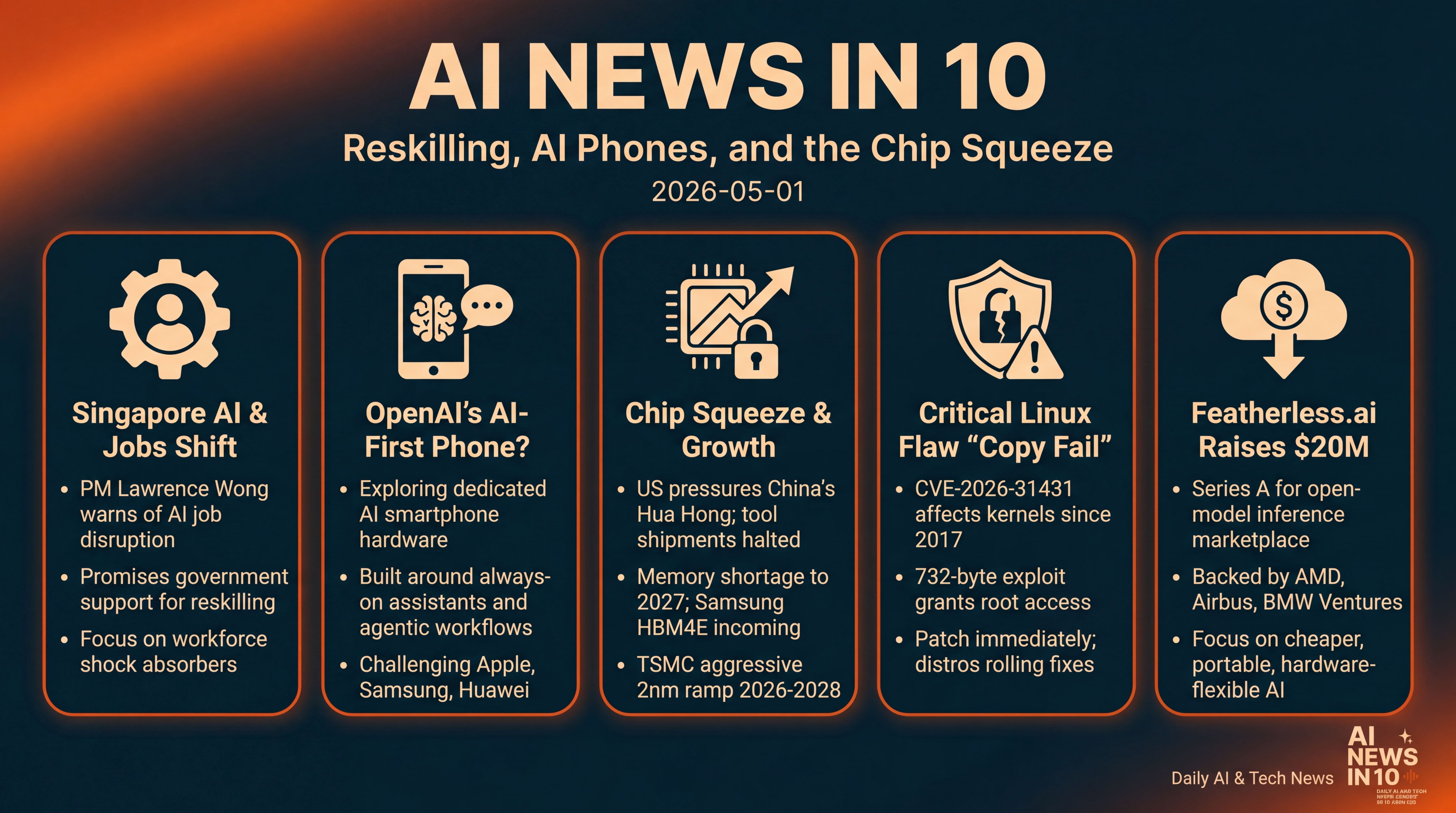

Reskilling, AI Phones, and the Chip Squeeze

Singapore readies workers for AI’s impact while OpenAI explores an AI-first phone. We run through chip pressures and standards, a serious Linux root flaw to patch now, and fresh funding for cheaper open-model inference.

Show Notes

Welcome to AI News in 10, your top AI and tech news podcast in about 10 minutes. AI tech is amazing and is changing the world fast. For example, this entire podcast is curated and generated by AI using my and my kids' cloned voices...

It’s Friday, May first. Here’s your quick tour of the day’s biggest AI and tech moves.

Singapore’s prime minister warns AI will reshape jobs—and promises support for reskilling. Bloomberg says OpenAI is exploring an AI‑first smartphone. In chips, the United States tightens pressure on China’s Hua Hong, memory standards inch forward, and TSMC signals a strong two‑nanometer ramp. A new Linux flaw called Copy Fail can grant root on major distros... patches are rolling out. And Featherless.ai just raised twenty million dollars to make open‑model inference cheaper.

Let’s dive in.

First up—Singapore. In a May Day address, Prime Minister Lawrence Wong said the country faces bigger disruptions ahead from geopolitics and the rise of AI, warning that jobs will change, some will disappear, and the pace of change will be faster than before. He paired the warning with a promise: government support to help workers reskill and adapt. It’s a notable framing—less about hype, more about workforce shock absorbers—and it hints at how export‑driven economies plan to ride the AI wave without leaving people behind.

Story two—the pocket computer is getting a rethink. Bloomberg reports OpenAI is actively exploring an AI‑first smartphone—not just a better app, but hardware built around always‑on assistants, fast on‑device inference, and the new agentic workflows many of us are testing at work. The idea would throw OpenAI into a lane long dominated by Apple and Samsung—and Huawei internationally. The company has reportedly been laying groundwork with high‑profile design talent and considering what a post‑app‑grid experience could look like... think intent‑driven actions rather than tapping through folders. If this lands, watch two battlegrounds: who controls the default model on the device, and how much of the AI runs on‑device versus in the cloud.

Now, a packed chip check. SemiEngineering’s Week in Review highlights a few threads that matter if you’re building or buying AI capacity right now.

Washington just put fresh pressure on China’s domestic chip build‑out: the U.S. Commerce Department told toolmakers to halt shipments to Hua Hong Group, China’s number‑two foundry, to protect the U.S. lead at advanced nodes. If you’re modeling availability risk for capacity in 2026 and 2027, pencil in tighter tool access on the mainland.

Memory and packaging remain chokepoints. The roundup flags a widening memory shortage into 2027, and Samsung plans to sample HBM4E this quarter—a reminder that every hyperscale GPU rack still lives or dies on advanced memory, not just the compute chips.

Standards keep inching forward. JEDEC published a new DDR5 multiplexed rank data buffer spec—one of those under‑the‑hood changes that squeezes more bandwidth for training and inference without ripping and replacing entire platforms. Expect incremental capacity and utilization wins for data‑center fleets as vendors adopt it.

And TSMC is guiding aggressive growth at two nanometers, with capacity projected to climb rapidly from 2026 through 2028—a signal that bleeding‑edge wafer supply should loosen a bit even as AI demand keeps roaring. Put differently... the foundry staircase is rising, but so is the load we’re placing on it.

Fourth, a heads‑up for Linux admins and anyone running containers at scale. A newly disclosed local‑privilege‑escalation bug nicknamed Copy Fail—tracked as CVE‑2026‑31431—affects Linux kernels going back to 2017. Researchers at Theori say a 732‑byte exploit reliably gets root by abusing a logic bug in the kernel’s crypto template, enabling a controlled four‑byte write via a crypto socket interface and the splice system call. Patches landed upstream in early April, and distros are rolling fixes now—but many systems won’t be current yet. Prioritize multi‑tenant hosts, CI runners, and Kubernetes clusters. If you can’t patch immediately, disabling the kernel’s AEAD algorithm interface module is a viable stopgap. This one is closer to Dirty Pipe in practicality than your average local privilege escalation... so don’t sleep on it.
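
For admins weighing that stopgap, here is a minimal sketch (not from the episode) of checking whether the module is currently loaded. It assumes the AEAD interface in question is the `algif_aead` kernel module and that it is built as a loadable module; a built-in would not appear in `/proc/modules`. The function names are hypothetical.

```python
from pathlib import Path

# Assumption: "algif_aead" is the module behind the kernel's AEAD
# algorithm interface mentioned in the story.
MODULE = "algif_aead"


def loaded_modules(proc_modules_text: str) -> set[str]:
    """Return module names from /proc/modules-style text.

    Each non-empty line starts with the module name as its first field.
    """
    names = set()
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields:
            names.add(fields[0])
    return names


def is_loaded(name: str, proc_modules_text: str) -> bool:
    """True if `name` appears as a loaded module in the given text."""
    return name in loaded_modules(proc_modules_text)


if __name__ == "__main__":
    path = Path("/proc/modules")
    if path.exists():
        state = "loaded" if is_loaded(MODULE, path.read_text()) else "not loaded"
        print(f"{MODULE}: {state}")
    else:
        print("/proc/modules not found; is this a Linux host?")
```

A "not loaded" result only describes the moment of the check, since modules can be loaded on demand later. For the actual unload or blacklist steps, follow your distro's advisory.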

And fifth, a funding note that points to where the market’s headed. Featherless.ai raised twenty million dollars in Series A funding, co‑led by AMD Ventures and Airbus Ventures, with BMW i Ventures and others participating. The company pitches a production‑ready alternative to proprietary AI stacks—a marketplace for specialized open models and deeper integration across diverse hardware to drive down inference costs. The mix of investors here is telling—silicon, aerospace, and autos—which tracks with what we’re hearing from enterprises that want portability, lower unit costs, and the freedom to swap models and accelerators without rewriting the world.

Quick recap... Singapore is bracing its workforce for rapid AI change. OpenAI may try to reshape the phone around assistants. Chips remain the fulcrum—from U.S. export controls to memory and standards. Linux shops should patch the Copy Fail vulnerability now. And venture dollars are flowing into open, hardware‑flexible AI stacks.

That’s your AI News in 10 for Friday, May first—see you tomorrow.

Thanks for listening. A quick disclaimer: this podcast was generated and curated by AI using my and my kids' cloned voices. If you want to know how I do it or want to do something similar, reach out to me at emad at ai news in 10 dot com, that's ai news in one zero dot com. See you all tomorrow.